In the days before Blender Conference 2022, the Animation & Rigging module organised a workshop: The Future of Character Animation & Rigging. The main participants were Brad Clark, Christoph Lendenfeld, Daniel Salazar, Jason Schleifer, Jeremy Bot, Nate Rupsis, Nathan Vegdahl, Sarah Laufer, and Sybren Stüvel. The main goal of the workshop was to produce design principles for a new animation system, and to design a set of features to make Blender an attractive animation tool for the next decade.

Blender’s animation system is showing its age. The last major update was for Blender 2.50, back in 2009. Since then a lot has been tacked on, improved, and adjusted, but the limitations of how far we can stretch it are showing. These limitations are not just technical, but also in usability, showing themselves in the complicated workflows required to even get started rigging and animating.

The Workshop

The Animation & Rigging module organised a workshop to create a vision for a new animation system. The main goal: to empower Animators to Keep Animating, for the next decade. It also serves to kick off a new project: Animation 2025, which will run from the start of 2023 to the end of 2025. More concrete planning for the project will happen in the coming month.

In the weeks preceding the workshop there were various meetings to thoroughly prepare for what was sure to become a hectic three days. You can find what was discussed in the meeting notes (2022-09-15, 2022-09-29, 2022-10-03, 2022-10-12, 2022-10-13, 2022-10-17).

We gathered info in open-for-everyone documents. In the end we had:

- 35 pages of broad ideas, answering the question “If you were given a blank slate, and could have any kind of character animation system in Blender, what would it look like?”

- 35 pages of specific issues, answering the question “What’s stopping you from animating in Blender?”

- During the workshop we produced an additional 5 x 3 metres of shared whiteboard.

All the above documents are linked from the workshop page on the Blender wiki.

The results of the workshop were presented at Blender Conference 2022. Recordings are available on video.blender.org and YouTube.

Principles

These are the design principles for a new animation system. In this section, each principle is explained, and examples are given of how we envision these principles to result in concrete animation & rigging features. In short, they are FIFIDS:

- Fast: The less you have to wait for Blender, the more creative freedom you have.

- Intuitive: Understandable, predictable tools allow artists to work faster.

- Focused: Activate flow state. Tools should help, not get in the way.

- Iterative: Celebrate exploration, allow people to change their minds.

- Direct: Have interaction & visualisation directly where you need it.

- Suzanne: Be a good Blender citizen.

Of course these intertwine. An intuitive and direct system will help to keep focused, and there are many more connections between them. Below we go deeper into each principle.

Fast

Fast is all about the speed of Blender itself. The faster it is, the more different acting beats you can try out before a shot needs to be delivered. This speed can be categorised as interaction and playback speeds.

Fast interaction means that manipulating a rig should feel real-time. This should be achieved with the final deforming mesh, and not some simplified, boxy stand-in. Playback speed concerns replaying animation: doing playblasts and frame jumps. These should be real-time, at the project’s frame rate.

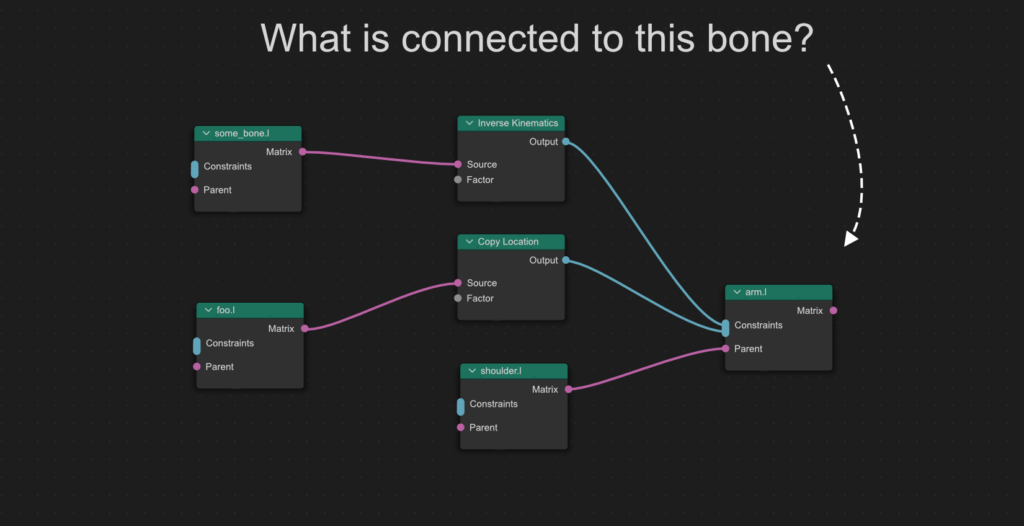

Of course these goals are not just the responsibility of the Blender development team. Regardless of how optimised Blender is, it will always be possible to create complex, slow rigs. During the workshop we thought up tools to help riggers find out how a rig works, and what makes it slow: Rig Explainer and Rig Profiler.

A schematic view of the rig can also be used to show profiling information, giving insight into how much time is spent on each node. For some of these metrics a heat map on the mesh itself could be possible as well.

Disclaimer: unfortunately there are limits to how much performance can be squeezed out of real-life computers. The above is thus a guideline, something to work towards, rather than a promise of specific performance numbers on specific hardware.
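The per-node timing idea behind the Rig Profiler can be sketched in plain Python. This is only an illustration and not Blender's API: `profile_nodes`, `to_heat`, and the toy node names are hypothetical, and a real profiler would presumably measure inside Blender's own rig evaluation rather than by calling functions in a loop.

```python
import time
from typing import Callable, Dict


def profile_nodes(nodes: Dict[str, Callable[[], object]], repeats: int = 5) -> Dict[str, float]:
    """Return the average wall-clock seconds spent evaluating each node."""
    timings: Dict[str, float] = {}
    for name, evaluate in nodes.items():
        start = time.perf_counter()
        for _ in range(repeats):
            evaluate()
        timings[name] = (time.perf_counter() - start) / repeats
    return timings


def to_heat(timings: Dict[str, float]) -> Dict[str, float]:
    """Scale timings to the 0..1 range; the slowest node maps to 1.0."""
    slowest = max(timings.values(), default=0.0)
    if slowest <= 0.0:
        return {name: 0.0 for name in timings}
    return {name: seconds / slowest for name, seconds in timings.items()}


# Hypothetical stand-ins for rig nodes; real nodes would solve constraints
# or deform meshes instead of summing numbers.
toy_rig = {
    "spine_ik": lambda: sum(range(2_000)),
    "face_shapes": lambda: sum(range(20_000)),
}
heat = to_heat(profile_nodes(toy_rig))
```

The normalised values in `heat` are the kind of number that could colour a node in a schematic view, or a region of the mesh in a heat map.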

Intuitive

Understandable, predictable tools allow you to work faster. Working quickly means seeing results faster & having time to explore different things. This can be achieved with tools that are familiar from other software, but more importantly Blender should be highly self-consistent and explorable.

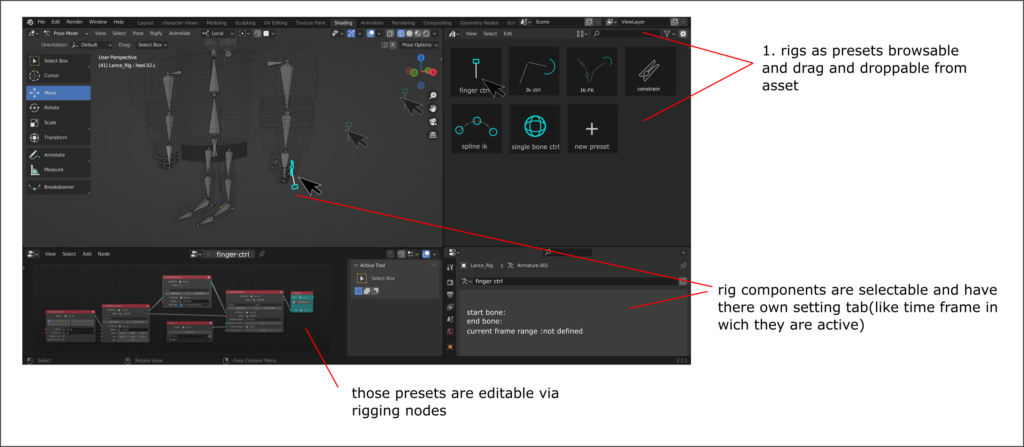

As a counter-example, to start rigging with Blender you need to know about objects, armatures, bones, and potentially also which add-ons to use, like Rigify. After that, you need to know that bone constraints are found in a different place than object constraints. Such issues could be addressed by more intuitive rigging nodes that can be applied to objects and bones alike. Rigs (or even partial rigs) could be contained in component libraries and be slapped onto a mesh or an existing rig. Fuzzy search can help you find the right components, even when you don’t know the exact wording used in Blender.
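As a rough illustration of fuzzy search over a component library, the sketch below uses Python's standard `difflib` module. The component names and the `find_components` helper are made up for this example; nothing here reflects an existing Blender feature.

```python
import difflib
from typing import List

# Hypothetical component library; these names are for illustration only.
LIBRARY = ["Biped Leg", "Biped Arm", "Quadruped Leg", "Spine", "Tentacle"]


def find_components(query: str, library: List[str], limit: int = 3, cutoff: float = 0.5) -> List[str]:
    """Return library names that roughly match the query, best match first."""
    # Compare in lower case so the search is case-insensitive.
    lowered = {name.lower(): name for name in library}
    hits = difflib.get_close_matches(query.lower(), list(lowered), n=limit, cutoff=cutoff)
    return [lowered[hit] for hit in hits]


matches = find_components("bipd leg", LIBRARY)
```

Even with the typo in the query, the closest name ("Biped Leg") comes back first, which is the behaviour the paragraph above is after.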

The Rig Explainer, mentioned above as a performance analysis tool, could also be used by the rigger to add notes to the rig, as documentation for other riggers and/or animators. We also want to move away from the old numerical bone layers and have named bone collections instead. This should also give rise to richer selection sets.

Temporary posing tools can make animating easier. For example, a pinning tool can be used to pin a bone in place, automatically making it an IK target, allowing for easy on-the-spot manipulation of an FK chain.

Finally there is the concept of design separation: deformation rigs, animator control rigs, mocap data, and automatic systems (muscles, wrinkles, physics simulations) should interact well together, without getting in each other’s way.

Focused

Focused is all about activating flow state. Having atomic, simple tools that can be combined for greater power. The concept of orthogonal tools also plays a role here: tools should not get in each other’s way.

Greater customizability of Blender’s interface will also help to keep animators focused, so that tools are available where they need them, in their personal workflows.

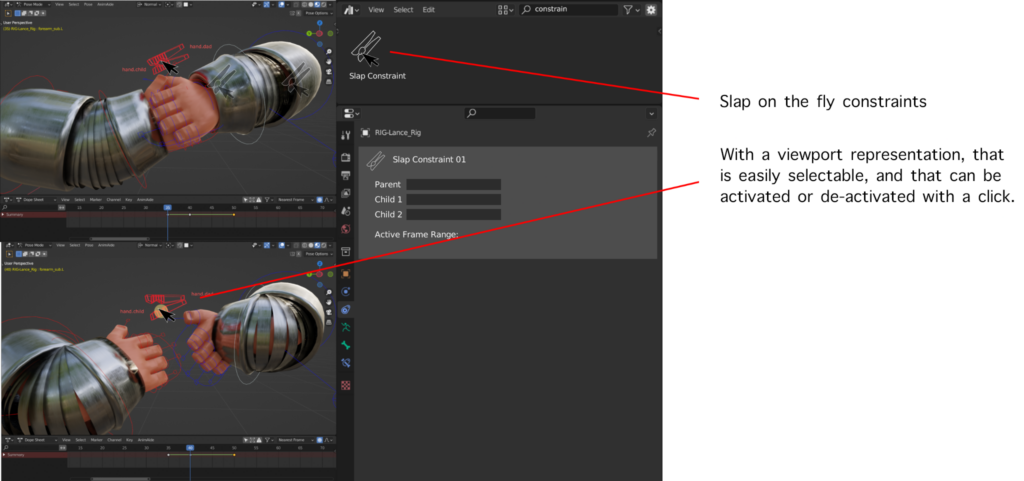

We’ve discussed visualising constraints directly in the 3D Viewport, close to the related 3D items, to avoid having to search for them in a property panel.

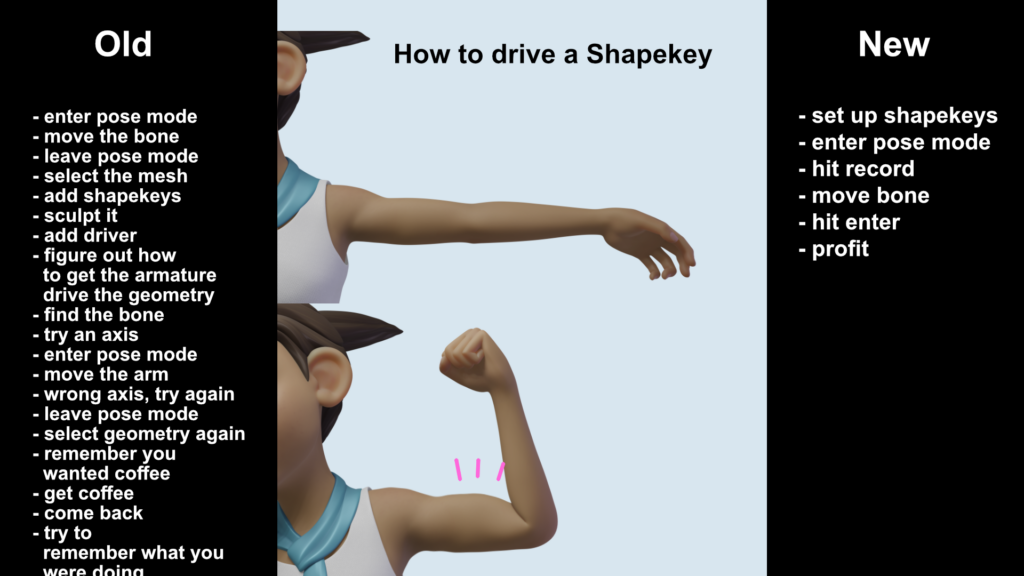

Another improvement would be example-based drivers, where you can just bend a joint and adjust the strength of a shape key, and Blender will understand how they relate and create the driver for you.
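One way to think about example-based drivers is as curve fitting: the artist supplies a few (joint angle, shape key value) pairs, and the tool derives the mapping. The sketch below fits a straight line with ordinary least squares. It assumes a linear relation and uses the hypothetical name `fit_linear_driver`; a real implementation might well choose a different model.

```python
from typing import Callable, List, Tuple


def fit_linear_driver(samples: List[Tuple[float, float]]) -> Callable[[float], float]:
    """Fit value = slope * angle + intercept to the examples (least squares)."""
    count = len(samples)
    if count < 2:
        raise ValueError("need at least two examples")
    mean_angle = sum(angle for angle, _ in samples) / count
    mean_value = sum(value for _, value in samples) / count
    variance = sum((angle - mean_angle) ** 2 for angle, _ in samples)
    if variance == 0.0:
        raise ValueError("examples must use at least two different angles")
    covariance = sum((angle - mean_angle) * (value - mean_value) for angle, value in samples)
    slope = covariance / variance
    intercept = mean_value - slope * mean_angle

    def driver(angle: float) -> float:
        # Shape key values are clamped to the usual 0..1 range.
        return min(1.0, max(0.0, slope * angle + intercept))

    return driver


# Three examples: elbow angle in degrees -> strength of a corrective shape key.
elbow_driver = fit_linear_driver([(0.0, 0.0), (45.0, 0.5), (90.0, 1.0)])
```

Calling `elbow_driver(30.0)` then yields the shape key strength for a pose the artist never explicitly set up.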

A final example would be to separate the viewport frame rate of foreground and background characters. Blender could then prioritise the foreground character when playing back animation. Background characters could update at a lower frame rate, to ensure the main focus of your work plays at real-time rates. Of course this feature would be configurable to suit your needs, and Blender would have to be told which characters it should consider “foreground” and “background”.
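The foreground/background split could be sketched as a simple per-frame decision about which characters to re-evaluate. The role names, the `background_step` parameter, and the function itself are assumptions made for this illustration.

```python
from typing import Dict, List


def characters_to_update(frame: int, roles: Dict[str, str], background_step: int = 4) -> List[str]:
    """List the characters that should be re-evaluated on this frame.

    Foreground characters update every frame; background characters only
    update on every `background_step`-th frame.
    """
    if background_step < 1:
        raise ValueError("background_step must be at least 1")
    return [
        name
        for name, role in roles.items()
        if role == "foreground" or frame % background_step == 0
    ]


# Hypothetical scene: one hero character and two crowd members.
roles = {"hero": "foreground", "crowd_a": "background", "crowd_b": "background"}
```

With these settings, `characters_to_update(5, roles)` contains only the hero, while frame 8 updates everyone.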

Iterative

Celebrate exploration. The work always evolves, and Blender should facilitate such change. Changes to the rig should be made possible without breaking already-animated shots. It should be possible to do A/B tests of alternates, from trying out different acting choices to different constraint setups. Animators should be allowed to add ad-hoc extensions to the rig, without needing to go back to the original rig file.

Concrete examples:

- A “takes” system where different “takes” can be switched across multiple animated characters & objects.

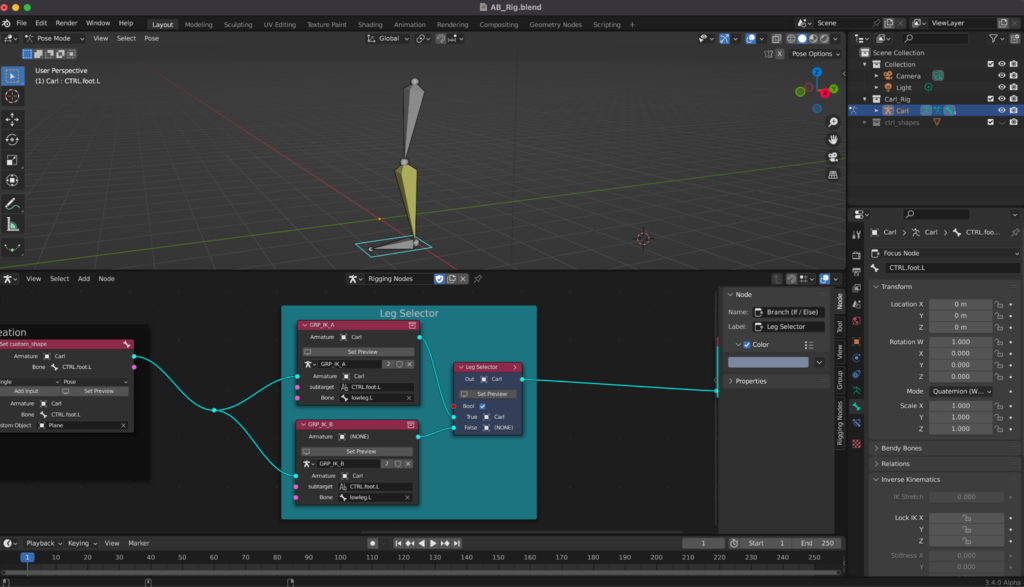

- A “branch picker” in a rigging nodes tree, to choose different versions of the constraint setup.
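To make the “takes” idea more concrete, here is a minimal data-structure sketch. It assumes a take is just a named mapping from object names to animation names; `TakeSet` and all names in it are hypothetical and say nothing about how Blender would actually store this.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class TakeSet:
    """Named takes, each mapping an object name to the animation it uses."""

    takes: Dict[str, Dict[str, str]] = field(default_factory=dict)
    active: str = ""

    def add_take(self, name: str, assignments: Dict[str, str]) -> None:
        self.takes[name] = dict(assignments)
        if not self.active:
            # The first take added becomes the active one.
            self.active = name

    def switch_to(self, name: str) -> Dict[str, str]:
        """Make a take active and return its assignments for all objects."""
        if name not in self.takes:
            raise KeyError(f"unknown take: {name}")
        self.active = name
        return self.takes[name]


shot = TakeSet()
shot.add_take("subtle", {"hero": "hero_subtle", "prop_cup": "cup_subtle"})
shot.add_take("broad", {"hero": "hero_broad", "prop_cup": "cup_broad"})
assignments = shot.switch_to("broad")
```

Switching the take changes the animation for the character and the prop together, which is what makes A/B comparison across multiple objects practical.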

Direct

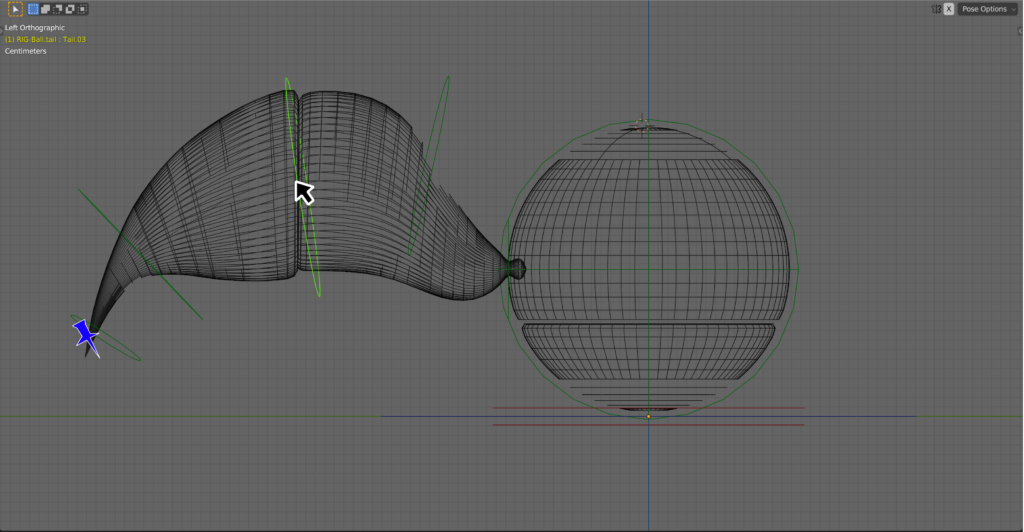

Work directly in the 3D viewport, or even in virtual reality. Mesh-based controls should make posing characters simpler: just point at what you want to move, and drag to move it. It should not be necessary to flip back & forth between the graph editor, dope sheet, and the 3D viewport. Visualisations should be editable; examples are editable motion graphs, or posable onion skins / ghosts.

We also discussed a richer system to describe the roles of bones. This way it’s easier for Blender to do “the right thing” when manipulating / visualising things. An example is an auto-IK system that knows how long IK-chains should be, due to annotations on the bones themselves. This could also avoid the need to have separate IK and FK bone chains.
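As a sketch of how bone annotations could tell an auto-IK system where a chain ends, the example below walks up the parent hierarchy until it reaches a bone marked as the chain root. The `role` field and its values (`"ik_root"`, `"ik_mid"`, `"ik_tip"`) are invented for this illustration.

```python
from typing import Dict, List, NamedTuple, Optional


class Bone(NamedTuple):
    parent: Optional[str]
    role: str  # hypothetical annotation, e.g. "ik_root", "ik_mid", "ik_tip"


def ik_chain(bones: Dict[str, Bone], tip: str) -> List[str]:
    """Collect bones from the tip up to (and including) the annotated IK root."""
    chain: List[str] = []
    current: Optional[str] = tip
    while current is not None:
        chain.append(current)
        if bones[current].role == "ik_root":
            return chain
        current = bones[current].parent
    raise ValueError(f"no bone annotated as 'ik_root' above {tip!r}")


arm = {
    "spine": Bone(parent=None, role="body"),
    "upper_arm": Bone(parent="spine", role="ik_root"),
    "forearm": Bone(parent="upper_arm", role="ik_mid"),
    "hand": Bone(parent="forearm", role="ik_tip"),
}
chain = ik_chain(arm, "hand")
```

The chain length falls out of the annotations (three bones here), so the animator never has to set it by hand.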

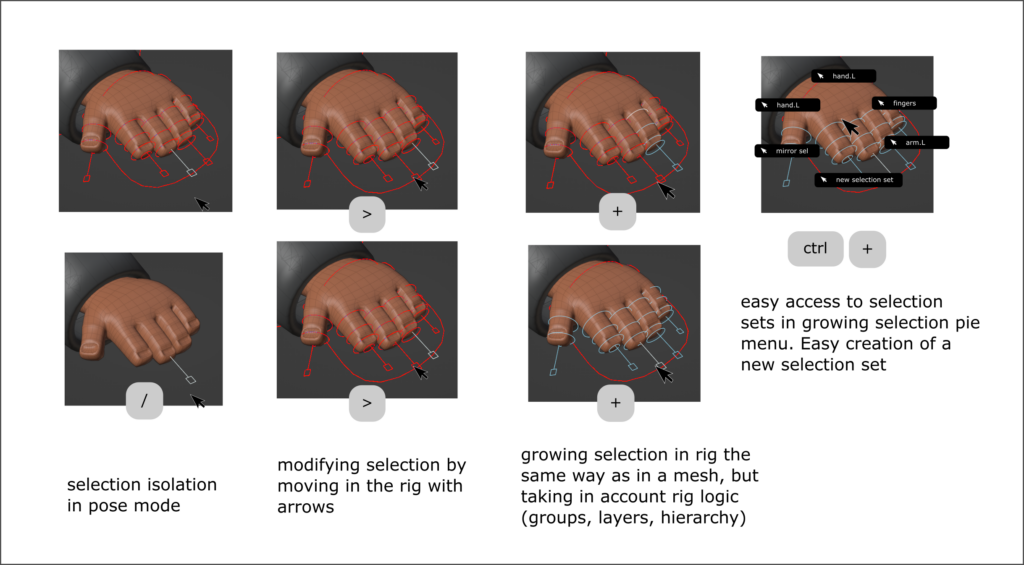

Better selection tools should allow animators to select related controls more easily. For example, when a finger is selected, moving to an adjacent finger (while pressing a hotkey) should extend the selection to that finger, or even to the rest of the hand at that level (i.e., selecting all the fingertips). Another example is the ability to quickly select the same finger on the opposite hand.

Finally, manipulating poses across multiple frames should be possible. Think of things like adjusting a hand grabbing motion to account for a new position of a prop. Basically proportional editing across time.
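Proportional editing across time can be pictured as applying an offset at one frame and letting it fade out over neighbouring keyframes. The sketch below uses a smoothstep falloff on a single animated channel; the function names and the choice of falloff are assumptions, not a description of any existing tool.

```python
from typing import Dict


def smooth_falloff(distance: float, radius: float) -> float:
    """Weight of 1.0 at the edited frame, fading smoothly to 0.0 at the radius."""
    if distance <= 0.0:
        return 1.0
    if distance >= radius:
        return 0.0
    t = 1.0 - distance / radius
    return t * t * (3.0 - 2.0 * t)  # smoothstep


def adjust_across_time(keys: Dict[int, float], centre_frame: int, offset: float, radius: float) -> Dict[int, float]:
    """Add `offset` at `centre_frame`, with a falloff over `radius` frames."""
    return {
        frame: value + offset * smooth_falloff(abs(frame - centre_frame), radius)
        for frame, value in keys.items()
    }


# Keyframes of a hand's X location; the prop moved, so the grab at frame 12
# needs to shift by 2 units, with nearby keys following along.
hand_x = {10: 0.0, 12: 0.0, 14: 0.0, 20: 0.0}
adjusted = adjust_across_time(hand_x, centre_frame=12, offset=2.0, radius=4.0)
```

Keys close to frame 12 follow the edit partially, while the key at frame 20 is outside the radius and stays untouched.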

Suzanne

Be a good Blender citizen. Whatever changes are made, they will fit within the existing look and feel of Blender. UI/UX should feel familiar, and shortcuts / tools should be unified within the new system. To the best of our ability, the new system will be backward compatible. Additionally, the new animation system will need to integrate well into existing “animation-adjacent systems”, i.e. Grease Pencil, motion tracking, etc. We aren’t going to take ownership of these modules; instead, we encourage collaboration with the module owners and community to enhance these tools. We promise to try our best not to break anything :)

Finally, every step of the way will be under the umbrella of the community. With Blender chat, module meetings, Right Click Select, Devtalk, and Phabricator / Gitea there are more ways than ever to get involved. Again, we’re creating tools for Animators. We need your help to make sure this stuff is awesome!

Project Timeline

The Animation 2025 project will start on 1 January 2023, and run until the end of 2025. The timeline below is malleable, and will likely change over the course of the project as new insights develop.

- 2023: Build prototypes, prioritising the Rig Explainer, Rig Profiler & performance, 3D Onion Skinning, and Rigging/Constraint Nodes. A few different prototypes are built, to explore different solutions. These should then be implemented in a way that compartmentalises those aspects that depend on the current implementation of Armatures, Actions, etc.

- 2024: With the new insights, reconsider the current Armatures, Actions, and Objects to allow multi-object animation, better data handling, layering of animation data, etc. Speed things up, work on more improvements.

- 2025: More usable cool stuff.

Conclusion

These plans are BIG, and we are excited to have the opportunity to work on them. Three years is likely not enough time to implement all of it, but with a team working on this for three years it’s certain to have a big impact on Blender. And, after that, we feel that the FIFIDS principles will guide the development process for a long time.

The Animation & Rigging module will still be working on fixing bugs and improving the current Blender features. New features will be merged into mainstream Blender as often as possible.

Finally, we want to address a response we’ve seen pop up here and there already, namely that these ideas are “nothing new”. We like to see this as a good thing, though: they’re not just dreams and fantasies. All those ideas are grounded in reality, showing that they can actually be done. And of course bringing those things together in an Open Source tool that is available for everybody, that is something new and exciting!

To keep track of what’s going on in the Animation & Rigging module, keep an eye on the module meeting notes, or even join a module meeting to be part of the discussions.

The Animation & Rigging module is also searching for more developers! Contact @dr.sybren in #animation-module on Blender Chat if you want to help out.

How do I make things?

When I first started animating in Blender, I was surprised people actually chose to animate in Blender. It seemed sort of rebellious and prideful compared to Maya. The thing that still snags me in Blender is the Graph Editor UI, which is why I have been using Maya recently for my Disney-style 3D career. In Blender, soloing graph lines, selecting keyframes, selecting handles, and adjusting handles feels really clunky and unnecessarily difficult. I don’t understand why you can’t select multiple handles or solo a graph. I have learned a lot about how to use Blender, from rigging characters with a target and a deformation rig for game engines, to the built-in breakdowner, but the Graph Editor is my stand-out UI problem. I didn’t see much mention of it in this article, but with an overhaul this extensive, improvements to it will probably be included. I thought it would be a good idea to touch base on this anyway; I could be wrong.

Go to Edit > Preferences > Animation, and set Unselected F-Curve opacity to 0.

Then, in your Graph Editor, go to View, make sure Only Selected Curve Keyframes is active.

Finally, save your Startup File by going to File > Defaults > Save Startup File, so your settings will be saved when you open that Blender version from then on.

You can also select keyframes and handles just fine. I don’t see any complication here tbh.

Thanks for this tip with visibility soloing of the graph editor. How do I select multiple handles at once?

When bendybone can be exported in a compatible way, it will be a legend.

I can’t even use it for bindings right now because it completely fails when exporting to other software, or game engines.

People are talking about things that are too advanced.

I just hope to get a simpler tool for drawing weights!

Just like the sculpting tool, add weights, hold Ctrl to reduce weights, hold Shift to smooth weights, and hold Alt to draw weight gradients.

Would also like to have a smarter weight locking option that takes into account the locked weights when normalizing the weights.

But there was little mention of it in these meetings.

One more thing, if I’m working on one character’s controller, when I want to work on another character’s controller at the same time, I have to switch to object mode, then select another character’s controller, and then put them both into pose mode together.

If I want to add another box object as a temporary reference, I need to go back to object mode, then create a new box, then select the two characters, and then go back to pose mode. Wait? You can’t select a box to animate in the skeleton’s pose mode, so go back to object mode, select the box, animate it, select the two characters’ controllers, and then go back to pose mode.

This completely stopped me from animating in blender.

It’s so comforting to see that geometry nodes will be involved in animations.

Since I started using Blender in 2014, I’ve never been interested in Blender’s current animation system, especially for making game animations.

But now, I’ll wait for 2025 for the release of this new system, which will completely transform the animation workflow in this incredible software.

The real time playback speed is a must have.

I’d love to get involved with ideas of my own for improving the overall experience.

Ideas we have in abundance. Developers is what we need.

Amazing where this is going and how far blender has come ♥ I am looking forward to the new changes allowing so much more for the body and movement! THANK YOU to all who have brought Blender to this level ♥ ♥ ♥

This is the future of animation! How cool is technology! Very inspiring!

This is really exciting. I’d love to see some features similar to the procedural animation system CAT in 3ds Max. It’s buggy as hell, but the ability to create very specific walk and run cycles with zero keyframes is incredible. It also allows automatic action transfer/retargeting between armatures that are completely different. Here’s a quick demo of what’s possible: https://youtu.be/R5NmiXpqBsQ

Thank you

Now make a goal for Modeling too.

Modo is the best modeling tool for a reason.

Great!

I agree 300%, but I regret that the proposal is only oriented towards character animation.

There is much more to animate than characters.

Motion, simulation, physics, music, rhythms, materials etc. are part of the animation mix.

And actually, the software is sorely lacking in flexibility and productivity when the scenes are not conventional (i.e. like “Disney way”)

The easiest way to stall development is to chew off too much, and to try and change too many things at the same time.

If you’re willing to help out with development, that would be excellent! Come join one of the module meetings, they’re open to anybody. Check https://devtalk.blender.org/c/animation-rigging/21 for past meeting notes & the agenda of the upcoming meeting.

Great news but only 2 suggestions:

1) Making the rigging agnostic, procedural, and node-based is key to becoming a standard. Vertical nodes are better because they are shaped like the character being developed.

2) Maya is the de facto animation software that is LOVED and USED by animators.

Rumba (Presto equivalent) is full of amazing modern ideas too.

DON’T try to reinvent the whole wheel out of pride or ideology; get inspired by these 2 pieces of software a lot. If they are the top animation tools in the world, it is for a reason.

Don’t forget the beloved SOFTIMAGE

I don’t know if I read that correctly but I think they’re adding muscles (unless I’m Presley dumb and muscles already exist in blender)

I’m really excited for these plans and seeing how everything comes together, especially the rigging nodes.

My only request for things to consider and put some emphasis on when building these tools would be to make sure the rigs/animations can be more compatible with game engines than they currently are in Blender. Getting Blender to export to Unreal has a lot of issues that take a lot of painful trial and error of ticking checkboxes and re-exporting/importing over and over until it finally seems to work.

This is a more minor concern, but often importing rigs from other programs looks really bad, such as rigs imported from Maya. If there was some way to build in a compatibility layer on import to correct for errors, that would be useful. Even if it’s just some way of letting me write a Python script to preprocess the bone data before it actually finishes the rig import. I know the priority isn’t to do things the way other companies do it, but easing these pain points for a lot of people who have already committed many years in other programs would help them transition to using Blender in their workflow instead of resisting it. Also, being able to re-use years’ worth of pre-existing rigs from other programs would be very valuable for a lot of people.

Just some personal thoughts, obviously you don’t have to do any of it but for me these are a few of the pain points I have with Blender in my current workflow.

Not a developer, but as far as I know this relies on proper reading and writing of FBX. Being a closed format, it had to be reverse-engineered to even arrive at the importer and exporter we have now. Either Autodesk open-sources FBX or another (open) format should replace it gradually. This is my understanding of the issue.

Yes, Unreal unfortunately is currently pretty tightly bound to the FBX format if you want to import skinned meshes. I have heard it mentioned once on an Unreal livestream that they are working on a new importer but I don’t know actually where in the source that is or what the goals of it are.

There used to be some movement towards supporting gltf in unreal, but I believe the main developer who used to work on it at Epic is no longer an employee there.

I am a programmer but I have no expertise about the specifics with licensing issues or the specifics on how FBX is implemented in blender/unreal. It’s a really big task, I’m not overlooking that side of it and Blender is ultimately free software at the end of the day.

I just want to make sure that these use cases are being discussed because it is a huge problem for developing in Unreal and therefore a huge problem for people who want to use Blender but are unwilling to because of these kinds of issues. These are ultimately all solvable problems if given the right effort. I’m also willing to help make it happen too, but the first step is getting people talking about it.

> My only request for things to consider and put some emphasis on building these tools would be to make sure the rigs/animations can be more compatible with game engines than they currently are in Blender.

Improving interoperability is of course always good. The point is HOW. What could Blender change to make this happen? And what would have to be done on the game engine side? What are the current problems, what is their root cause, and what would be a FIFIDS solution?

I’m not asking these questions to get answers here in the comments, but rather to illustrate the questions that I’d have when tasked with this topic.

Hey, thank you for replying, it’s good to know you guys actually read this stuff.

This is something I would actually like to help out on the development side if possible.

I can only speak to my own experiences and I can start logging the issues when they come up in the future to get more specific. But a lot of the issues I have stem from the export process feeling like a black-box. Blender has its own way of representing data in the outliner but this isn’t necessarily how every other program does it.

While it is good that the armature is separate from the mesh, which is separate from the pose inside the outliner, it makes it hard to understand what happens upon export from a pure data perspective. What is the name of the actual root node? Is an extra one created?

There is a common feature in game engines called Root Motion, which translates any animation offset data from the root-most node of the skeleton into the actual character moving instead of just the visual skeletal mesh. This is currently really difficult to export correctly because of the lack of insight into what is actually being exported. Blender seems to always add a root bone named after the Armature object.

Unreal has built in a hack on import to account for this: if you name your root bone “root”, it will actually remove the first index so that it can enable root motion.

It’s mentioned in numerous threads online, but it’s really hard to track down the correct solutions, and neither program documents this in its official docs either.

https://developer.blender.org/T63807

—

A possible solution I would propose that I believe is in-line with the idea of everything nodes would be to be able to define ‘export nodes’. Houdini has this as a feature I think is extremely useful. It allows you to setup an export in multiple different formats with different settings saved in independent files or be saved as re-usable assets later on. It still suffers from the visibility problem but it at least saves your settings so you can try multiple versions of export settings and you can name the nodes differently so you don’t have to remember this giant list of options.

They also retain selection of what to export and end filepath.

For example, you can make export nodes named like:

‘UE4 Modular Static Mesh Export – X Game project’

‘UE4 Character Export – X Game project – Rig Only’

‘UE4 Character Export – X Game project – Animation Only’

The naming does exist in Blender currently in the form of operator presets, but exporting different objects changes the singleton-style state that the export window is set to. This breaks the flow state of just saying: OK, this thing is modified, now just export it. Even though you already did all the setup of which preset to use and where it was saved in the past, you lose this data every time you work with a new object or file within a scene and export that.

—

There are other ideas like if there was a way to preview an outliner as you’re setting up options, or view scale of the result or see the converted axes on the bones visually.

While it’s an interop thing I do believe these things still align with the goals for having FIFIDS solutions.

Fast: Exporting is a slow process, and this can result in hours of extra work in my workflow per day

Intuitive: Currently with these hidden operations and inability to store multiple export settings easily and switch between them, differences in outliner data view between programs, it makes the process very difficult to understand and not intuitive.

Focused: Currently because it is such a haphazard process it doesn’t allow for a flow state, although it is not a task directly done inside the animating process itself, for games this is the process of iterating on animations and it does ruin the flow.

Iterative: The singleton style behavior of the export window currently makes it very difficult to iterate on these assets. When switching between files or contexts in this workflow you lose your settings and have to remember to reset them every time.

Direct: Also currently very indirect because you can only see the end results of the process once it is already done and you have imported the result into a game engine where you see the errors and have to backtrack your way to a solution in Blender.

I forgot to put the last S for Suzanne, be a good blender citizen.

I believe these changes would be congruent with the proposed goals of everything nodes. And you don’t need to destroy the current export window. An export node would ideally just use the same settings/interface but be able to save those settings per export instance as assets or inside the .blend file itself.

There are also many use cases beyond games of course. Simulation caches, storing multiple different states of objects, different versions. A test video playblast export vs a finished one, etc.

This is dope. Really excited to see some editable motion trails. It’s crazy that this isn’t a feature in Maya or a Maya plugin already.

Did you mean Blender ? It’s a standard feature in Maya

I want a soft body solver specifically for muscle fat

I agree, these things are really needed!!!

This is really exciting. I love that it’s so wide-reaching.

I think this is all very very exciting and can’t wait to test the tools out. Every feature will be a godsend.
