A few weeks ago (2-6 May), a workshop focused on the Video Sequence Editor was held. The main participants were Richard Antalík, Sergey Sharybin, Sebastian Parborg and myself. The main goal of the workshop was to review and confirm key design tasks as part of the VSE roadmap.

Francesco Siddi

Here is an overview of the topics discussed.

For several other ongoing topics (preview transforms, tilting, etc) help from the community is always welcome.

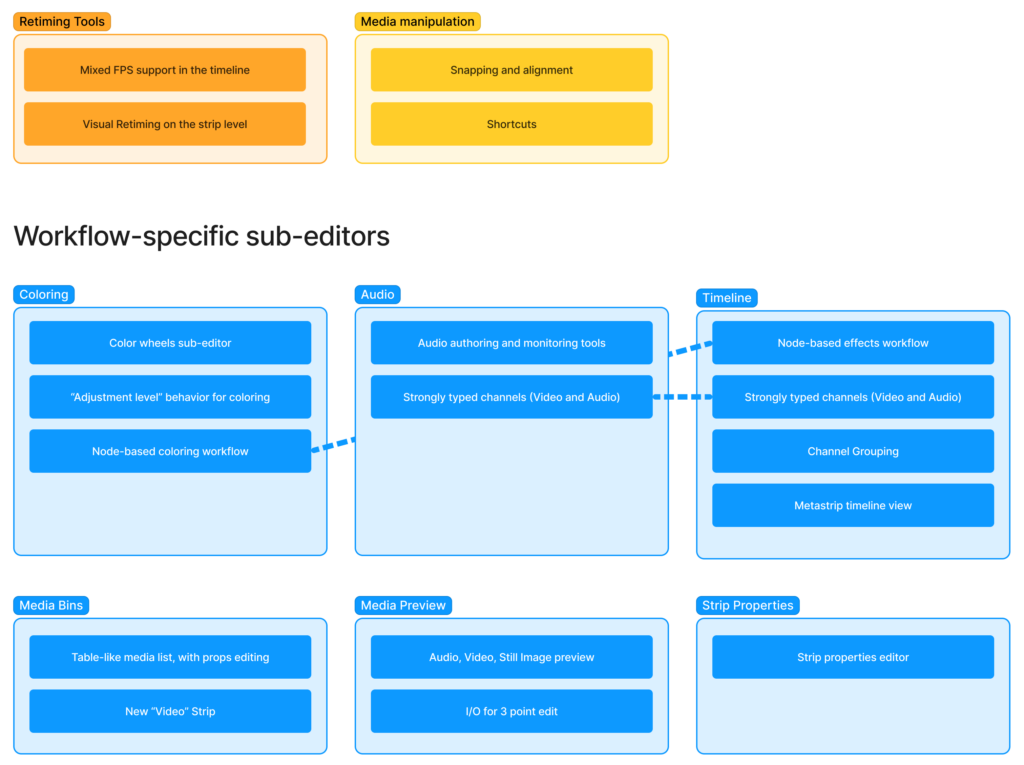

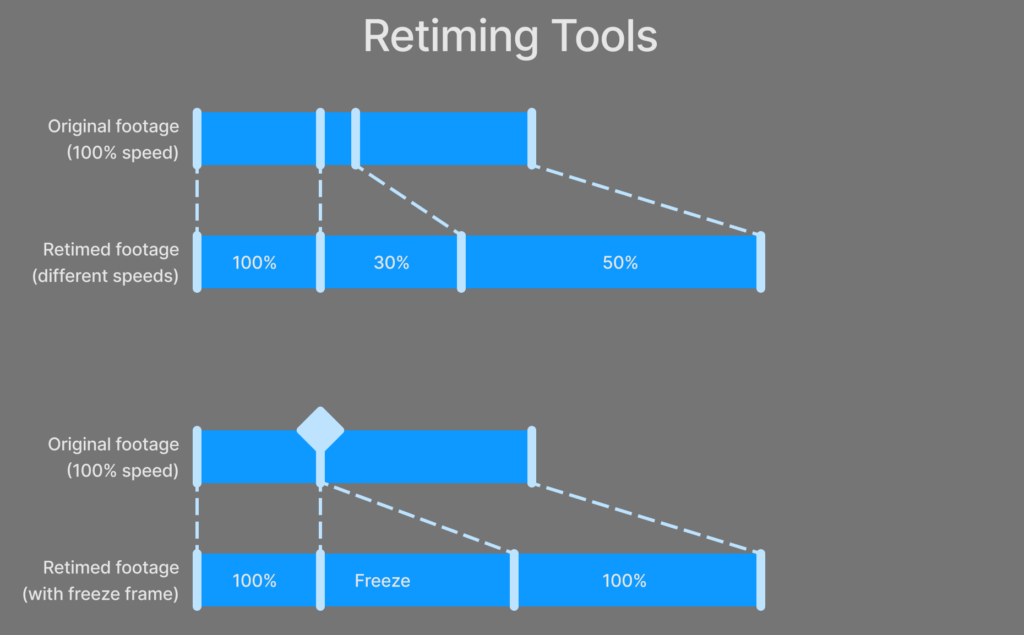

Retiming Tools

The currently ongoing –and highest priority– topic is “Retiming Tools”. This relates to how Blender handles footage with a different frame rate from the current scene, as well as tools and settings to retime video strips. While this functionality is already available through the speed effect, the idea is to replace it with a strip-level property and drop the effect strip.

Manipulating the timing of a video strip should be visual, clearly showing when footage is being stretched (slowed down), squashed (sped up) or frozen.
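As a rough illustration of what a strip-level retiming property has to compute, here is a minimal Python sketch. It is not Blender's implementation: the function names `source_frame` and `retimed_length`, their parameters, and the assumption of a single constant speed factor per strip are all hypothetical.

```python
from fractions import Fraction
import math


def source_frame(timeline_frame, strip_start, scene_fps, media_fps, speed=1):
    """Map a scene frame to a frame index in the source media.

    Hypothetical helper, assuming one constant speed factor per strip:
    speed < 1 stretches the footage (slow down), speed > 1 squashes it
    (speed up), and speed == 0 freezes on the first source frame.
    """
    offset = Fraction(timeline_frame - strip_start)
    # Exact rational arithmetic avoids off-by-one errors from float rounding.
    position = offset * Fraction(speed) * Fraction(media_fps) / Fraction(scene_fps)
    return math.floor(position)


def retimed_length(media_frames, scene_fps, media_fps, speed):
    """Number of scene frames needed to play all source frames at `speed`."""
    if speed <= 0:
        raise ValueError("a frozen or reversed strip has no natural length")
    length = Fraction(media_frames) * Fraction(scene_fps) / (
        Fraction(media_fps) * Fraction(speed)
    )
    return math.ceil(length)


# 48 fps footage in a 24 fps scene: one second in, we are at source frame 48.
print(source_frame(24, 0, 24, 48))
# Half speed doubles the strip length on the timeline.
print(retimed_length(100, 24, 24, 0.5))
```

A visual retiming tool would presumably vary the speed along the strip rather than keep it constant; the constant factor is used here only to keep the arithmetic readable.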

Media Manipulation

Recent Blender versions introduced media manipulation in the preview area, making it easier to quickly transform multiple video or image sources in the frame. Some more work is required to complete this effort, by introducing further shortcuts (for example to clear transforms or duplicating) and snapping. Community contributions are welcome!

Workflow-specific sub-editors

Most of the workshop’s time was focused on reviewing and re-defining existing workflows, as well as envisioning brand new workflows that would help artists create and handle more complex projects.

Most of these workflows should happen in dedicated sub-editors.

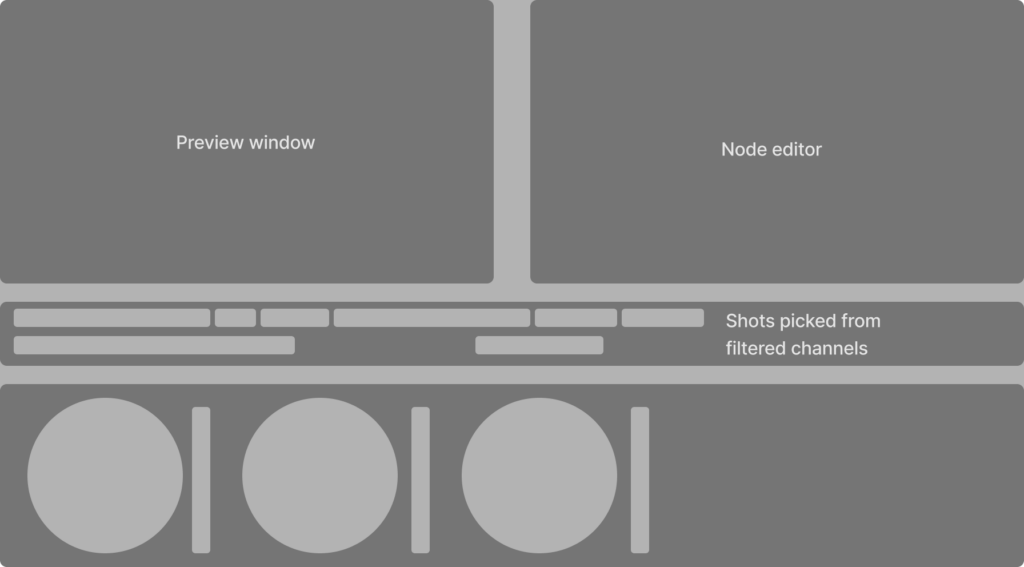

Coloring

A dedicated editor featuring quality color wheels, possibly with a horizontal layout, should be introduced. This editor will allow a shot-by-shot “adjustment level” behavior, typical of the coloring stage. Adjustments can be combined, layered and masked through a node-based interface.

Audio, and Typed Channels

As experienced by the Blender Studio team, building complex multi-channel sound edits is quite limited, because some authoring and monitoring tools are simply missing. The main examples are channel-wide automations and metering tools.

Introducing such features will likely require the introduction of a new concept in the VSE core design: Typed Channels. Typed channels will support either video-level content (video, images, color adjustment) or audio (sound strips). Having typed channels allows the definition of tailored UI elements for tasks such as global or per-track automation of volume, effects and panning, and monitoring volume levels through visual meters.
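To make the concept more concrete, the following Python sketch models a channel that only accepts strips matching its type. It is an illustration only: the names `ChannelType`, `Channel`, `ACCEPTED` and `add_strip` are hypothetical, and the actual VSE design has not been detailed.

```python
from dataclasses import dataclass, field
from enum import Enum


class ChannelType(Enum):
    VIDEO = "video"
    AUDIO = "audio"


# Strip kinds accepted per channel type, following the description above.
ACCEPTED = {
    ChannelType.VIDEO: {"video", "image", "color_adjustment"},
    ChannelType.AUDIO: {"sound"},
}


@dataclass
class Channel:
    name: str
    type: ChannelType
    volume: float = 1.0  # channel-wide level, only meaningful for audio
    strips: list = field(default_factory=list)

    def add_strip(self, kind, name):
        """Add a strip, rejecting kinds that do not match the channel type."""
        if kind not in ACCEPTED[self.type]:
            raise TypeError(f"{self.type.value} channel cannot hold a {kind} strip")
        self.strips.append((kind, name))


dialogue = Channel("Dialogue", ChannelType.AUDIO)
dialogue.add_strip("sound", "line_01")
```

Because the type is known up front, a UI could show a volume meter on `dialogue` without inspecting its strips first.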

Sequencer Timeline

Next to the introduction of Typed Channels, further work is needed to extend the functionality of channels. For example, the introduction of an “active channel” will allow channel-wide edits to strips, as well as keyboard accessibility for muting, locking or tagging/grouping channels.

Channel grouping is also an important feature that will help manage large scale edits. For example, it should be possible to group related video or audio channels, and have different timeline layouts based on them. Groups should be considered more like tags, and not like a hierarchy.
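The difference between tags and a hierarchy can be sketched in a few lines of Python. This is a hypothetical model, not a proposed data structure: each channel carries a set of free-form tags, so one channel can appear in several groups at once, which a strict parent/child tree would not allow.

```python
from collections import defaultdict


def channels_by_tag(channel_tags):
    """Invert a {channel: set_of_tags} mapping into {tag: [channels]}."""
    groups = defaultdict(list)
    for channel, tags in channel_tags.items():
        for tag in tags:
            groups[tag].append(channel)
    return dict(groups)


# Channel 2 belongs to both groups; no group is the parent of another.
channel_tags = {1: {"dialogue"}, 2: {"dialogue", "music"}, 3: {"music"}}
layout = channels_by_tag(channel_tags)
```

A timeline layout could then be built per tag, for example by showing only the channels listed under `layout["music"]`.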

Finally, a new metastrip view should be introduced. This allows viewing the content of a metastrip without losing context of the timeline.

Media Bins

Already on the roadmap for a while, the need for a dedicated list of media in an edit has been reconfirmed. This should be combined with the creation of a new “Video” strip, which would use a reference to the media list as its source (instead of a file path). This allows for more accessible media management.
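One way to picture the benefit of referencing the media list instead of a file path is the small Python sketch below. The classes `MediaItem` and `VideoStrip` and the example paths are hypothetical and only illustrate the idea; they do not describe an existing or planned Blender API.

```python
from dataclasses import dataclass


@dataclass
class MediaItem:
    name: str
    filepath: str


@dataclass
class VideoStrip:
    media: MediaItem  # reference to a media list entry, not a path
    start_frame: int

    @property
    def filepath(self):
        # The path is always looked up through the media list entry.
        return self.media.filepath


media_bin = [MediaItem("interview_a", "footage/interview_a.mov")]
strips = [VideoStrip(media_bin[0], 1), VideoStrip(media_bin[0], 250)]

# Relinking the media once updates every strip that references it.
media_bin[0].filepath = "proxies/interview_a.mov"
```

With path-based strips, the same relink would have to be repeated on each strip individually.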

Previewing Media

A Media View sub-editor should be established, in which to preview images, video and sound before inserting them into the timeline. This pattern is very common in video editing software, and it’s a fundamental element of the 3-point-edit workflow.

Strip Properties

Finally, the topic of strip properties editing was discussed, focusing on the creation of a dedicated sub-editor for this task. This would provide a more spacious interface for editing strip properties, compared with what is currently possible in the properties sidebar of the VSE timeline. However, the topic of strip properties editing can also be solved in different ways (node-based editing, on-canvas editing, etc.) so this needs further investigation.

Design Principles

During the workshop all fundamental VSE principles were upheld, in particular:

- Playback should always be realtime

- All video editors should always be responsive and interactive

- The VSE Timeline should be considered a canvas, giving video editors the freedom and flexibility on where to place strips

Conclusions

It was Richard’s first visit to the Blender HQ, and it was the first time that a meeting focused on the future of the VSE was held. Overall it was a very useful experience, showing how much potential this set of editors still has. While a timeline has not been established, it’s clear that these tasks will take months to complete. Contributions, feedback and comments on the above discussed topics are welcome!

Great news that Blender is developing a video editing tool.

One piece of feedback I would like to give: not everyone’s computer can always play the edit in real time, so they might run into problems. A pre-render option, where the footage is rendered in a lower-quality codec for previewing, would be a very nice addition.

And one more thing: Blender offers great customizability in its normal 3D workspace, more than any other 3D software I’ve ever used, and I have been using 3D software since LightWave on the Amiga. It would be very nice if the modularity and workflow customization options that exist within the 3D workspace carried over to the Video Editor. That’s one of the things that sets Premiere above other video editors: you can change so much and work in whatever way you want. Even though the software is proprietary, it still offers that flexibility.

It would be cool to have not only a flat color layer in the VSE but also a gradient layer.

I think of it like a color plane, a 2D color ramp where we can add color points on the canvas (+ button in the N panel of the strips, then LMB click on the canvas to place the active color) and Blender interpolates the colors between those. And colors include transparency for easy masking.

For 2 colors, a linear and a radial gradient are useful, maybe still for 3 colors, but for 3+ colors a radial gradient can become funky. But maybe it is still doable with Blender and even usable for the user :)

Great to see development here. Please consider making video rendering faster. It is really slow at the moment compared to other video editors (on a Ryzen 7 4800H, 16 GB RAM). Thanks for your effort.

Strongly typed channels are a very good idea, as almost all (professional) video editing software distinguishes between audio and video channels.

It would be great if the UI was implemented using “separate” timelines for audio and video (video on the top, audio on the bottom, video channels grow upwards while audio channels grow downwards), just as DaVinci Resolve, Premiere Pro, Olive, Kdenlive, and many others do. This makes it a lot easier to deal with many channels.

This is a really great proposal, and I’m excited for the VSE. I would love to see Blender develop a competent video editor with all the bells and whistles needed for a full workflow. I think it would be worth the effort.

Color is something I feel quite strongly about, and I would like to make a proposal for the color wheels. I’ll give a brief outline here, but I would love to detail it somewhere else. Is there someplace suitable for such a proposal?

The lift-gamma-gain wheels are of course tried and tested, and get the job done. However, there are more flexible workflows nowadays, where you have more than three wheels, and each wheel can be tuned for a specific luma range. These wheels would be called something like black-dark-shadow-light-highlights. Ideally the number of wheels is flexible, and wheels can be added or removed at will. If they are flexible, the classical lift-gamma-gain could be the default, with a list of presets for those of us who need more wheels. Also, not present in the mockup, each wheel should have its own saturation slider, maybe on the left side of the wheel.

If someone could point me to the best place to type out a proposal for Blender I’d appreciate it.

I want to see those features:

– integration of FFmpeg’s libavfilter which includes minterpolate (motion interpolation) filter

– VSE IO addon should be included and enabled by default

– HDR / Wide-Color Gamut (DCI-P3/Rec. 2020) support

– Automatic Color Matching for primary color correction

I think if Blender’s VSE is planned to have big changes, these are things that I’d suggest to be added:

1. More tools in the Sidebar besides Tweak and Blade, such as:

• Multi-select – Selects multiple clips without having to press the Shift key.

• Stretch – Acts like a trimmer too, but works in a different way. When a strip is stretched outwards, it gets longer but plays slower. On the other hand, when a strip is stretched inwards, it gets shorter but plays faster. So this is basically a quick “change speed” tool;

2. Different editing workspaces. Other editing software out there has this thing. Examples: Editing, Color, Audio, etc;

3. Easier masking;

4. Multicam;

5. Audio Level Panel;

6. Audio EQ;

7. In-App Audio Recording;

8. Left-and-Right Audio Keyframable Panning; and

9. Improved Scopes UIs.

And also some things that I’d suggest be improved:

1. Improve the performance of playback when a video/image strip has a Modifier; and

2. Improve playback performance when there’s a scope window opened

It is an unprecedented idea to add complete tuning functions to modeling and animation software.

But as for professional tuning software, I think only FabFilter can meet my requirements.

That is because FabFilter not only has equalization and reverb, but also dynamic processing functions such as compression and expansion, whose purpose is to adjust the volume accurately.

I am also thinking of a function that few other tuning tools have: an effect, based on convolution, that changes one sound waveform into an approximation of another, such as adding a metallic effect to a human voice.

Would it be an option to use other OS applications (like Audacity) for the audio editing?

Like, integrate specific applications for specific tasks?

While this is already possible to a certain extent, the goal of this design is to offer an integrated solution.

This add-on will let you round trip single audio strips or the full edit in Audacity: https://github.com/tin2tin/audacity_tools_for_blender

If you really want to turn Blender into complete editing software, instead of simply outputting 3D scenes and rendering them into a folder, I hope for the following.

First, video.

1. OFX: compatibility with OFX plug-ins for video color blending.

2. Transition node: node-based transition preset asset library.

3. Adjustment layer: a track adjusts the colors of all tracks below, and this color adjustment function also has nodes.

Second, audio.

1. VST plug-in for audio processing.

2. High-speed audio interface: WASAPI alone is not enough. Multi-channel IO such as ASIO and Core Audio is also required, as well as monitoring route assignment. If I have a MOTU 8pre or a Midas M32, I need these to work with multiple microphones or headphones.

3. FX send: similar to the video adjustment layer. For example, two people talking in a tunnel need the same reverb; in professional tuning software you only need to add an FX track and then send the other tracks to it.

As for the Adjustment Layer itself, it already exists in Blender.

I mean, adjustment layers can use nodes.

If you’re aiming at “pattern is very common in video editing software, and it’s a fundamental element of the 3-point-edit workflow” maybe you’ll find the homework the Kdenlive folks did to map out fundamental elements of NLE editing interesting: https://kdenlive.org/en/video-editing-applications-handbook/ (And note the VSE currently has plenty of non-industry-standard oddities which are alienating new users).

If this is “mostly a list of topics that are considered strategic for the future of the VSE. Detailed breakdowns and planning on the tasks have not been agreed upon.”, you should not ask for user contributions until the tasks are clear.

And what about the VSE 2.0 project then? As a follower of this project, I’m interested. There are still many unfilled checkboxes. Is it over? Do they replace each other? Or will these plans not interfere with the goals of VSE 2.0?

The VSE 2.0 task should be updated. There is no conflict between what’s mentioned in this article and the goals of that task.

Thanks, great to hear that you are thinking about future improvements for the Blender video workflow.

Did you also discuss possibilities to move the video processing pipeline to the GPU? I think translating the effects and video processing to shaders might result in major performance improvements, as this is all done on the CPU currently. Wouldn’t HIP-API be an idea as it was announced to be used by Blender anyway? It would also allow the code to run on CPU and GPU.

Ideally the video pipeline could reuse the real time mechanisms for the Eevee compositor? In general a video is not much more than a plane with a video texture on it.

Great to know that the VSE is getting improvements.

My concern is how the VSE will preview scene strips.

Will a new node editor be created for VSE color grading, or are you going to use the compositor node editor?

Request:

* Create a two-point track or a mask for a selected strip.

* Make the VSE independent of the scene.

* If possible, create a new node editor for audio effects (EQ, echo, reverb, etc.), e.g. like FL Studio’s Patcher.

The idea is to introduce a dedicated editor, but this still needs to be tested and validated.

Oh, this is great. The VSE is already so much better than a couple years ago (previews, overwrite…). Why the decision to design a dedicated editor for nodal color adjustment when we have the compositor? Can’t we make it so the user links a compositing node tree to a specific VSE strip?

Compositor and video editor have different goals. The interface for editing effects could be the same, but the specs are different. Linking compositor output to a VSE strip could be considered only once the compositor (and renderer) can produce near-realtime results.

Great to hear that there has been some communication on the future of the VSE, but what was actually decided on? And why wasn’t any of the UI team in that meeting?

Ex. what is the actual design for the retiming of strips? How is the speed to be illustrated on the strips? (There was a patch to draw the curve of the resulting frame and freeze frame areas ages ago)

When you ask for help from contributors, you’ll need to be much more specific than this: “For several other ongoing topics (preview transforms, tilting, etc) help from the community is always welcome.” & “Recent Blender versions introduced media manipulation in the preview area, making it easier to quickly transform multiple video or image sources in the frame. Some more work is required to complete this effort, by introducing further shortcuts (for example to clear transforms or duplicating) and snapping. Community contributions are welcome!” (There actually was a contributor patch for additional preview functions including shortcuts ages ago)

When discussing color grading workflows, to me it doesn’t make much sense unless this can be done on video in 10+ bit color space. Was this discussed?

Most of the topics discussed here include UI, why wasn’t anyone from the UI team present?

How does “The VSE Timeline should be considered a canvas, giving video editors the freedom and flexibility on where to place strips” fit with “Typed Channels”? If the channels are typed, I guess you can only place ex. sound strips in audio channels? Or should the content of the channels determine the channel type?

As for media management, the asset browser enables most of the described functionality, however sequencer content should either be converted into the existing data blocks or have data block categories of their own. The asset browser could include a video preview where in and out points can be set before import into the sequencer. Was this discussed?

A lot of work on Storyboarding has been done by the GP team, but on the VSE side what was discussed on this topic? Ex. better integration of the scene strips, caching and previews?

And most importantly, is the VSE module going to work as a module with monthly meetings, plans, contributor inclusion, and decisions actually being made?

This is mostly a list of topics that are considered strategic for the future of the VSE. Detailed breakdowns and planning on the tasks have not been agreed upon. Also, before talking about UI, there are several aspects of the UX/workflows that need to be investigated and reviewed. At this moment there are no monthly meetings planned.

All of these seem like good improvements.

I have been using Blender as a video editor for quite a long time, and knowing it is still in the developers’ focus is great.

What Blender is really missing though (and wasn’t in the past) is the ability to export in Apple ProRes format. If Blender is intended to be used as a video editor, having ProRes back (or at least an equivalent transcode format option, which I don’t know of) is mandatory.

If I understand correctly, Blender uses FFmpeg (which supports reading and writing of the ProRes format) but the ProRes output has been disabled intentionally (I guess for copyright reasons?) in the output panel.

Not sure when the ProRes codec was dropped.

Consider having a look at the commit history for the export settings and reaching out to the person who made the change.

I don’t have time to bisect in search of the exact commit, but for sure 2.73 was able to export in QuickTime with 3 options equivalent to ProRes:

uncompressed_10-bit_4:2:2

uncompressed_8-bit_4:2:2

uncompressed

Dropping this support means that if you want to edit video sequences instead of frame sequences, quality is compromised, since all other available compression codecs / containers can’t bypass re-compression on export/import.

I also found a (rejected) patch, and the thread seems to clarify the developers’ opinion about this.

https://developer.blender.org/T35615

I’m still not interested in the new features of the Blender movie editor. The most troublesome problem is this:

If you use Blender to finish the whole process from modeling to editing and you suddenly find a place where the model is pierced, you have to press F12 and run ray tracing from beginning to end again.

Therefore, I strongly recommend adding the features I proposed in the previous review.

https://devtalk.blender.org/t/movie-editors-suggestion/24567

Bear in mind that the future of media is not to bake multiple clips of media into a single new piece of media. The point at which the programme is composited will be at playback. So playback can be suited to the audience. Variables include: language(s) spoken, number of people watching, size and aspect ratio of playback device, visual abilities, audio abilities of audience. Editing is the preparation of metadata in how to present a variety of pieces of media. To accompany that media in a database.

Don’t fall into the trap of thinking editing is about helping put things in tracks. The track (or strip) metaphor was needed because computers in the 80s and 90s were limited.

These are huge improvements for the VSE, which is great. Are there any deadlines already defined for these, or will this be discussed in a further meeting?

No deadlines for now, this is mostly an overview of the topics discussed.

If you want to do the editing software thing, I think good transition and effect presets, as well as better video transcoding tools, are still necessary.
