Disclaimer

This document was built on top of the development and research led by Julian Eisel (Severin) as part of the Google Summer of Code 2016. This proposal is a usability amendment to his design with the viewport project in sight. See the original documentation [here].

Introduction

The current layer system in Blender is showing its age. It has proven effective for simple scene organization, but it lacks flexibility when organizing shots for more convoluted productions.

The layer system for Blender 2.8 will integrate workflow and drawing requirements. It will allow for organizing the scene for a specific task, and control how different sets of objects are displayed.

The following proposal and design document tries to address the above by unifying the object and render layers and integrating them with the viewports and edit modes.

For the rest of the document, whenever we refer to a layer we are talking about the new layer system that replaces all previous layer implementations in Blender. Since in the future we will have other kinds of layers (e.g., animation), the layer referred to here will be renamed soon (view layer? render layer? workflow layer?).

Data Design

Here is a proposal for how to separate data, and integrate the layer concept with scene data. In 2.8 we will split the scene into layers, and each layer will have a mode and an engine to use for drawing. Each viewport can show a different layer.

Data Separation

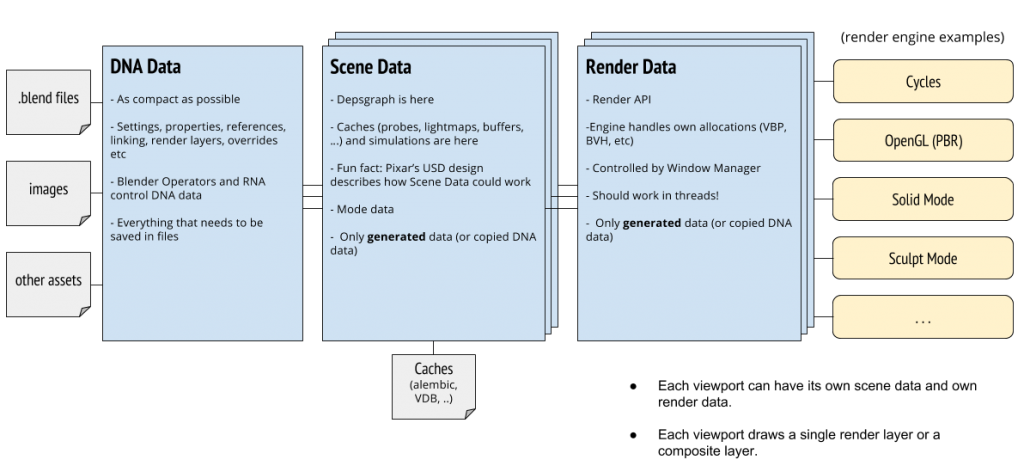

DNA data (what gets saved and what we work on), Scene data (based on animation or scripts), and Render Data (engines) should have an explicit separation. In 2.8 we will split viewport rendering into many smaller ‘engines’.

(Data diagram revised from original data design by Sergey Sharybin and Ton Roosendaal)

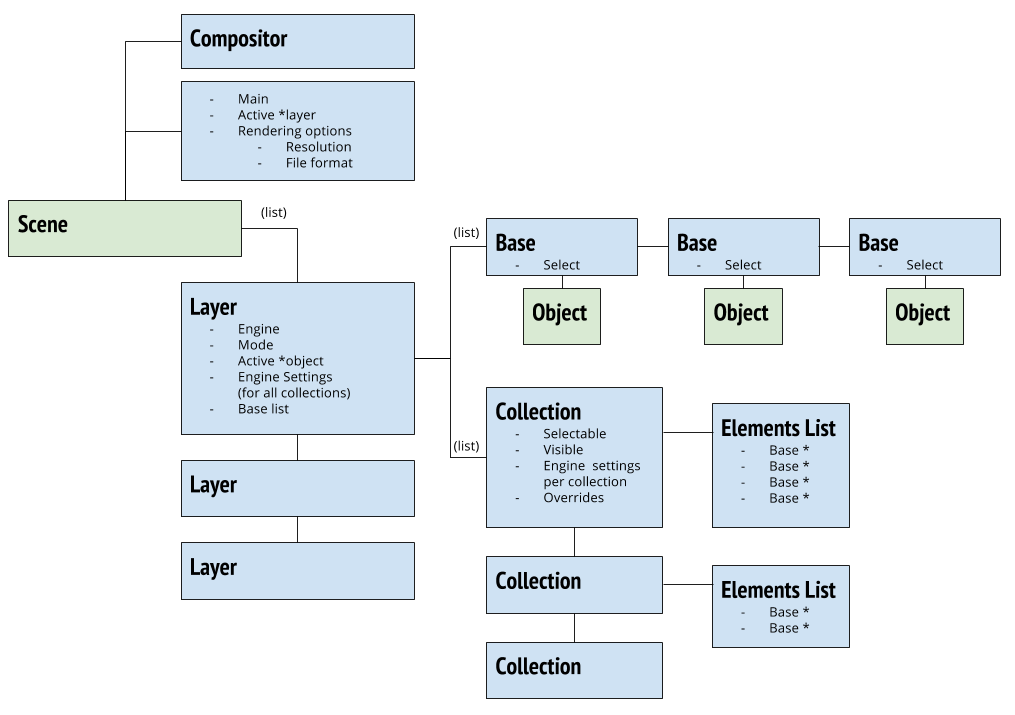

Scene and Layer

Each layer will have its own active object and selected objects. We drop the scene base, and guarantee there is always a layer in the scene (as we do for Render Layer now). A scene also has an active layer, which is the one used to determine the “global” mode for the other editors.
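A minimal sketch of these guarantees, written in plain Python rather than Blender's actual DNA structs. The class name `Scene`, the dictionary layout and the rule for picking a new active layer are all assumptions made for illustration.

```python
class Scene:
    """Toy scene: always holds at least one layer, one of which is active."""

    def __init__(self):
        # Each layer keeps its own active object and its own selection.
        self.layers = {"Layer": {"active_object": None, "selected": set()}}
        self.active_layer = "Layer"

    def add_layer(self, name):
        self.layers[name] = {"active_object": None, "selected": set()}

    def remove_layer(self, name):
        # Assumed enforcement of "there is always a layer in the scene".
        if len(self.layers) == 1:
            raise ValueError("a scene must keep at least one layer")
        del self.layers[name]
        if self.active_layer == name:
            # Assumption: fall back to the first remaining layer.
            self.active_layer = next(iter(self.layers))


scene = Scene()
scene.add_layer("Lighting")
scene.layers["Layer"]["selected"].add("cube")  # selection is per layer
scene.remove_layer("Layer")
```

Selecting `cube` in one layer leaves the selection of the other layer untouched, which is the point of storing selection per layer.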

Render or Draw Pipeline

- Active Layer

- Collections define visibility, overrides, light!

- Update layer list (or make copy)

- Send to depsgraph (or use cached)

- Send scene data to engine

- Draw per-mode specific tools

- Draw or render

1 render layer = 1 image (multi-view, passes)
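The steps above can be read as a simple sequence. The sketch below walks through them with toy stand-ins: the dictionary layout, the `engine_draw` and `tools_draw` callbacks and the `eval(...)` strings are hypothetical placeholders, not Blender's depsgraph or engine API.

```python
def draw_layer(scene, engine_draw, tools_draw):
    """Walk the pipeline steps in order and return (image, overlay)."""
    # Active layer.
    layer = scene["layers"][scene["active_layer"]]

    # Collections define visibility (overrides are left out of this sketch).
    objects = []
    for collection in layer["collections"]:
        if collection["visible"]:
            objects.extend(collection["objects"])

    # Update the layer list: work on a de-duplicated copy.
    object_list = list(dict.fromkeys(objects))

    # Send to the depsgraph (stand-in: tag each name as evaluated).
    evaluated = [f"eval({name})" for name in object_list]

    # Send scene data to the engine, draw per-mode tools, then draw or render.
    image = engine_draw(evaluated)
    overlay = tools_draw(layer["mode"], object_list)
    return image, overlay


scene = {
    "active_layer": "anim",
    "layers": {
        "anim": {
            "mode": "POSE",
            "collections": [
                {"visible": True, "objects": ["rig", "prop"]},
                {"visible": False, "objects": ["lights"]},
            ],
        }
    },
}

image, overlay = draw_layer(
    scene,
    engine_draw=lambda objs: objs,
    tools_draw=lambda mode, objs: f"{mode} tools for {len(objs)} objects",
)
```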

Layers

A layer is a set of collections of objects (and their drawing options) required for specific tasks. You can have multiple layers in the scene for compositing effects, or simply to control the visibility and drawing settings of objects in different ways (aiming at different tasks).

A layer also has its own set of active and selected objects, as well as render settings. A few render settings, such as dimensions, views and file format, are kept at the scene level.

A scene can have multiple layers, but only one active at a time. The active layer will dictate which data the editors will show by default, as well as the active mode.

Collections

Collections are part of a layer and can be used for organizing files, fine-tuning how components are drawn and, eventually, supporting overrides of linked and local data. They are groups of objects defined individually or via name and other filtering options.

The drawing settings relative to a collection will be defined at the layer level, with a few per-collection engine-specific options (matcap, silhouette, wire).

A collection has the following data: name, visible, selectable, engine settings per collection, overrides, element list.
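As a rough illustration, the data listed above could be laid out as follows. The field names follow the list in the text; the types, the defaults and the `visible_objects` helper are assumptions, and the real structures would live in Blender's C code.

```python
from dataclasses import dataclass, field


@dataclass
class Collection:
    name: str
    visible: bool = True
    selectable: bool = True
    engine_settings: dict = field(default_factory=dict)  # e.g. matcap, wire
    overrides: dict = field(default_factory=dict)
    elements: list = field(default_factory=list)  # object names, for illustration


@dataclass
class Layer:
    name: str
    collections: list = field(default_factory=list)

    def visible_objects(self):
        """Return each object of the visible collections once, in order."""
        seen = []
        for collection in self.collections:
            if not collection.visible:
                continue
            for obj in collection.elements:
                if obj not in seen:
                    seen.append(obj)
        return seen


characters = Collection("characters", elements=["hero", "sidekick"])
lights = Collection("lights", visible=False, elements=["key_light"])
layout = Layer("layout", collections=[characters, lights])
```

Here `layout.visible_objects()` skips the hidden `lights` collection and returns only the two characters.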

Mode

As part of the workflow design a layer will be created with a task in mind, and part of this task is the mode used at a given time. The mode will be an explicit property of the layer.

The modes available at any time depend on the active object. All selected objects (when possible) will enter the same edit mode. That means an animator will be able to pose multiple characters at once. The mode is no longer stored in the object.
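One way to picture the mode as a layer property is sketched below. The `MODES_BY_TYPE` table, the class layout and the return value of `set_mode` are hypothetical and only serve to show the mode living on the layer while the active object decides which modes are offered.

```python
# Hypothetical table of which modes each object type supports.
MODES_BY_TYPE = {
    "ARMATURE": {"OBJECT", "EDIT", "POSE"},
    "MESH": {"OBJECT", "EDIT", "SCULPT"},
}


class Layer:
    def __init__(self, objects):
        self.objects = objects  # object name -> object type
        self.selected = set()
        self.active = None
        self.mode = "OBJECT"  # stored on the layer, not on the objects

    def available_modes(self):
        """The active object decides which modes are available."""
        if self.active is None:
            return {"OBJECT"}
        return MODES_BY_TYPE[self.objects[self.active]]

    def set_mode(self, mode):
        if mode not in self.available_modes():
            raise ValueError(f"{mode} is not available for the active object")
        self.mode = mode
        # Every selected object that supports the mode enters it as well.
        return sorted(
            name
            for name in self.selected
            if mode in MODES_BY_TYPE[self.objects[name]]
        )


layer = Layer({"hero": "ARMATURE", "villain": "ARMATURE", "rock": "MESH"})
layer.active = "hero"
layer.selected = {"hero", "villain", "rock"}
in_pose_mode = layer.set_mode("POSE")
```

With both armatures selected, both enter pose mode, while the mesh is left out because it does not support that mode.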

Viewports

A viewport should be able to select a single layer to display at a time. However the viewport stores the least amount of data. A viewport doesn’t store the current mode, the current shading, nothing. Apart from the bare minimum (e.g., current view matrix) all the data is stored in the layer.

That also means we can have multiple viewports in the same screen, each one showing different objects, or even the same objects but with different drawing settings.

Since the settings are defined at the scene level (scene > layer > collection), and not in the viewport, multiple viewports can share the same drawing settings and object visibility. The same is valid for offscreen drawing, scene sequence strips, VR rendering, …
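The split between viewport and layer data can be sketched as below. The `Viewport` and `Layer` classes, their field names and the plain-list matrix are assumptions for illustration only.

```python
from dataclasses import dataclass, field


def identity_matrix():
    """Return a 4x4 identity matrix as nested lists."""
    return [[1.0 if row == col else 0.0 for col in range(4)] for row in range(4)]


@dataclass
class Layer:
    name: str
    mode: str = "OBJECT"
    shading: str = "SOLID"


@dataclass
class Viewport:
    # The viewport only keeps a reference to its layer and its own view matrix.
    layer: Layer
    view_matrix: list = field(default_factory=identity_matrix)

    def draw_settings(self):
        # Mode and shading are looked up on the layer, never stored here.
        return (self.layer.name, self.layer.mode, self.layer.shading)


anim = Layer("animation", mode="POSE")
camera_view = Viewport(anim)
posing_view = Viewport(anim)

posing_view.view_matrix[0][3] = 2.5  # only this viewport's view changes
anim.shading = "WIREFRAME"           # both viewports pick this up
```

Changing the shading on the layer affects every viewport that shows it, while each viewport keeps its own view matrix.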

Viewport and Render API

This still needs to be discussed further, but the initial idea is to re-define the Render API so that an external engine would define two rendering functions:

- RenderFramebuffer

- RenderToView

Where the latter would be focused on speed, and the former on quality. That creates a separation from the original idea of making the “Cycles-PBR”, or “Cycles-Armory shaders” part of the Cycles implementation.
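Since the API is still under discussion, the following is only a guess at its shape. The two method names mirror RenderFramebuffer and RenderToView from the list above; the arguments, the return values and the `ToyEngine` sample counts are invented for the example.

```python
from abc import ABC, abstractmethod


class RenderEngine(ABC):
    """Hypothetical interface an external engine would implement."""

    @abstractmethod
    def render_framebuffer(self, objects):
        """Final-quality render of the given objects."""

    @abstractmethod
    def render_to_view(self, objects):
        """Fast render of the given objects for viewport display."""


class ToyEngine(RenderEngine):
    # The sample counts are made-up numbers standing in for "quality" vs "speed".
    def render_framebuffer(self, objects):
        return {"objects": list(objects), "samples": 128}

    def render_to_view(self, objects):
        return {"objects": list(objects), "samples": 4}


engine = ToyEngine()
final = engine.render_framebuffer(["cube"])
preview = engine.render_to_view(["cube"])
```

An engine that omits either method cannot be instantiated, which is one simple way to require both functions from every engine.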

That also means we can (and will) have a new Render Engine only for nice good PBR OpenGL viewport rendering. The pending points are:

- How do we handle the external engine drawing

- Shader design (face, lines, points, transparency)

Render Engine View Drawing

There are two main options to implement this:

- Blender draws the objects using the render engine defined GLSL nodes, and some screen effects, handling probes, AO, …

- The render engine is responsible for going through the visible objects, binding their shaders, drawing them, …

Either way we will have the engine responsible for drawing the solid plates [see docs]. This then is combined with tools drawing, outlines, …

Engines such as Cycles really need to be in control of the entire drawing of their objects. However, for OpenGL-based engines, we could facilitate their implementations.

OpenGL Rendering Engine

A new render engine should be able to close the gap between fast viewport drawing and realism. This engine could take care of PBR materials that deliver Cycles-like quality, without having to mess with Cycles-specific shade groups.

In fact this engine should have an option as to the backend used for the views. The user should be able to pick between Cycles, PBR, Unreal, Unity, … and have the texture maps corresponding to the specific engine.

Cycles itself can still deliver a RenderToView routine that is faster than what it is now (with forced simplification, …). That said, it’s not up to Cycles to implement GLSL nodes that are similar to its BSDF implementations.

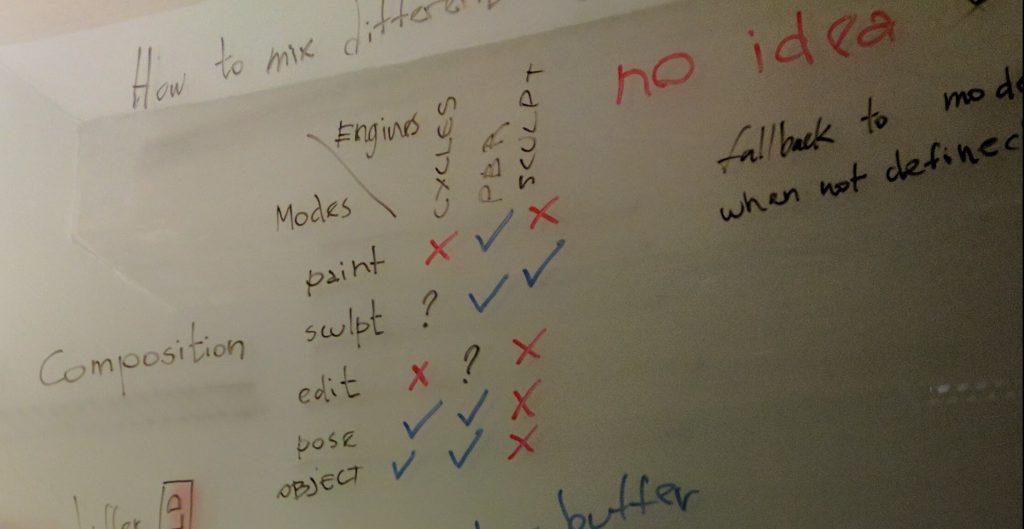

Mode Engines

We will have specific engines aimed at specific edit modes. Those engines can be used for drawing a layer exclusively, or combined with the layer render engine. For example, when using the Cycles render engine in object mode, we will have the object engine drawing nice highlights on the selected objects, as well as object centers and relationship lines.

However the object mode engine will also be accessible as a standalone engine option, bringing back a full solid drawing for the entire layer, with its own options. This also means we are dropping the current draw modes, and in particular the per-object maximum draw.
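The two uses of a mode engine described above, as an overlay on top of the layer render engine or as a standalone solid drawing, can be sketched like this. All function names and the string output are placeholders; whether the standalone engine also draws the selection highlights is an assumption of this sketch.

```python
def layer_render_engine(objects):
    """Stand-in for the layer's render engine (e.g. Cycles)."""
    return [f"shaded:{name}" for name in objects]


def object_mode_overlay(objects, selected):
    """Object mode engine used as an overlay: highlights for the selection."""
    return [f"highlight:{name}" for name in objects if name in selected]


def object_mode_solid(objects):
    """Object mode engine used standalone: solid drawing of the whole layer."""
    return [f"solid:{name}" for name in objects]


def draw(objects, selected, render_engine=None):
    # With a render engine, the mode engine only adds its overlay on top.
    # Without one, the mode engine draws the entire layer itself.
    if render_engine is not None:
        base = render_engine(objects)
    else:
        base = object_mode_solid(objects)
    return base + object_mode_overlay(objects, selected)


combined = draw(["cube", "lamp"], {"cube"}, render_engine=layer_render_engine)
standalone = draw(["cube", "lamp"], {"cube"})
```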

Discussion Topics

Topics for the usability workshop in November 2016.

How do we edit multiple objects?

Should we support mesh edit? Meta-balls? Maybe support for some modes only.

Grease Pencil

Since Grease Pencil is undergoing some changes, we should make sure it integrates well with this proposal.

Linking

Artists will want to re-use a carefully crafted layer/collection structure. Linking wouldn’t solve that because usually they wouldn’t want the objects to be linked in as well. We could have a save/load system for layer/collections. Maybe it’s something simply doable with Python, similar to how we save/load keymaps.

Scene Override

An animator’s typical workflow is to have one viewport for the camera view and another one for posing. Changing a setting such as simplify would now mean changing it in different layers (or collections), or having two other layers with different “simplify” settings and switching between them.

New Objects

New objects in Blender are automatically added into the active layer and active collection. But should we have a way to also add them into the non-active layers and their respective collections?

Acknowledgements

Original layer management design by Julian Eisel and Ton Roosendaal and all the developers and artists who provided extensive review on [T38384].

Current design elaborated with Ton Roosendaal and feedback from Sergey Sharybin. Feedback incorporated from Blender Studio crew.

Hi! I’m not that advanced in Blender, but could you tell me how to do layer compositing in the program now?

I would really like to see denoising not located in the render layers section but actually be able to denoise per layer, object or material. It would be way more flexible than being limited to render layers for isolating only certain parts of your model to be denoised.

It would be nice to have a pick whip like in AE to link anything in Blender.

It seems every day that I wish that were a feature in blender. Imagine what that could do for rigging! Instead of finding one out of potentially hundreds of bones to hook into a constraint, you just drag the line over and it’s in!

I’d kill for that.

Grease Pencil

Will Grease Pencil in Blender 2.8 be able to draw in real time in the 3D viewport? Basically, an artist could sketch ideas on top of geometry that is already in the scene to develop further ideas. For example, while holding down the Y key, one could draw a perfectly straight line along the Y axis, etc. This way an artist can draw beforehand, which would help in the modeling and design process.

Is there an option that needs to be set, or an add-on?

It would be a wise solution to just add the Collections concept to the time-proven 20 Layers and Outliner concepts.

I work with AutoCAD drawings with 4k layers daily; in Blender I usually use 10-15 of the 20 layers for massive scenes, like city blocks of 70 multi-section buildings, quite easily.

Yey! Long due and very welcome (it’s an everyday nightmare getting by with 20 layers in larger scenes).

Something seems somewhat awkward, probably because it’s all new :)

Not suggestions as I’m sure things are thought out way beyond instant ideas:

1. why the differentiation into collections and layers instead of hierarchical layers?

2. Won’t layers/collections duplicate the functionality of groups? Could they replace groups?

3. Is it potentially compatible with “nodes of everything”?

What I would love, and have asked for a few times several years ago is a per object visibility control as a float, instead of boolean. (so we can animate object visibility (rather than material visibility, or having to do some extra compositing)).

The object visibility/renderability is already implemented – just need to change the variable type, and whatever implications (which are huge) for the render pipeline.

not sure if feature is available;

the better way of use the Grease Pencil (logics),

would to enable on 3D view, to type plain text for timeline annotations;

had played a little, does not look very good to write with the mouse;

so just to explain the process[…]

it is good that it goes not have into care by messing with rendering configuration being a separated workflow; seem also mostly at [for] camera view;

then perhaps a custom Blender Grease Pencil font to be easily identified as being the annotation screen text; then again marking visually key frames(logics)

[^]annotations about:: frame length;Length Transitions; [and such alike];

–>then going more complex by setting this environment;

from the annotation logics(screen text) at the keyframes;

perhaps for being able to link a annotation process as “?ghost grease frames”

on which a block process would mark as screen text where to work with the frames expected by this custom screen annotation;

then this process could be a setup to be communicated at media [like] for creating transitions with Blender; as 3d presentation and such(concept);

also by remaining the current drawing tool by marking positions and so on;

Resuming::

the Grease Pencil has a setup that can be used to annotate your transition logics that are displayed visually to easily keep track of your keyframes production;

This is a great concept. But there are a couple of things I would like to mention in an a sort of PSA to the devs who will be working on this.

1) Please don’t try to implement ALL of this at one time. Slow, steady, controllable updates and releases throughout the 2.8 series will ensure that the rest of us have a working piece of software.

2) Automagically adding objects into “the active collection” upon creation is a big fat NO from me. This is just an extra step I have to do after making an asset (make sure I move it into the right collection after creating it). In fact, I would vote that no collections, or maybe only the original, base collection, are created unless I explicitly tell Blender to make me a new one. Implicit functions are easily the biggest pet peeves of most artists and programmers. If I didn’t tell my computer to do something, 90% of the time it shouldn’t be doing it.

3) Workflow specific tools make sense. Maya has this feature and handles it very well. However, from a UI/UX standpoint, please do not take away the customization of the current UI. A lot of these tools I think could get placed on the left toolbar, which needs a MASSIVE cleanup anyway. In addition to this, I hope there will be increased support for floating, dockable windows.

4) Overrides are a great idea. But seriously. Open up Maya, look at how they do it, copy the idea. It works. Flawlessly.

5) I will personally sponsor the development work for anyone who is willing to implement light-linking. It should be entirely possible now, and is entirely necessary.

– J

This all sounds really nice. Most of the time I have one particular beef with current layers, and I don’t know if this new design resolves it.

When creating an asset (character etc.) I like to set up some lighting and make quick preview renders with F12. Lights are in my way when editing and I keep them on a separate layer. But to have them in the F12 render, I have to make that layer visible. This is annoying, because it slows down the workflow big time.

So is the viewport layer (editing stuff) now separate from layers that are rendered with F12? Sometimes I have backgrounds and other stuff also, that I don’t want to see when editing, but want to have them on quick render lookup.

Thanks.

@vilvei,

this is possible already.

next to the dot layers in 3dview, there is an icon which is checked by default (immediately to the left of proportional editing). unchecking it separates the render layers from the working layers.

It would be great to support Katana-level lighting capacity.

http://forums.cgsociety.org/archive/index.php?t-1109797.html

e.g.

– deferred loading

– take system (rendering variations at one time)

https://www.sidefx.com/docs/houdini9.5/basics/takes

https://www.youtube.com/watch?v=c2uWlLYY_dc

Overall, love the design, think it will give users much, much more possibilities and control, and it integrates quite well with new viewport project too.

Now some thoughts and questions (food for the workshop ;) ):

1) IMHO, multiple edit for meshes/UVs/etc. is a no-go for initial work. This would imply a lot of design work in itself (on both UX and implementation sides) and, more importantly, require a lot of work adapting/rewriting all existing tools (especially afraid of transform area here, with all the complex things like snapping etc.). Think if we manage to get the sole pose editing working on multi-object level, it would already be a nice achievement for a start.

2) Scene override/new objects: Maybe I missed something here, but think this is the biggest open topic in proposed design? It also depends to what you want to do with layers (use them mostly in a ‘workflow’ aspect, or do they also have an important object-management role?).

But we could have a lot of flexibility and solve those topics by allowing layers to ‘share’ subsets of settings/data (either actual struct sharing, or through a ‘link and synchronize’ system), so e.g. layers A and B could share same collections, but have different modes or render engines, while layer C would have same render engine as B but different collections. That way, adding object while layer A is active would also automatically add it to layer B, but not to C. And the same could go for sub-collections (same sub-collection shared between several higher-level ones).

In other words, we could have ‘pools’ of render engines, modes, collections, and then each layer created by selecting items from those pools? This system should also make it easier to create new layers, rather than having to copy an existing one and then change potentially a lot of settings…

3) What about same object being added in several collections of a same ‘tree’? Do we forbid this (not sure how…)? Do we allow it, and how do we handle the ‘merge’ then, highest-level one wins? What about siblings then?

4) IO of layers/collections setup: yes, should be done through mere py scripts and RNA API.

5) Grease Pencil: think this also needs some talk to ‘merge’ it back a bit in Blender (why do we have specific GP brushes, palettes, etc? :'( ), but just as a reminder, there was the idea to make GP its own Object datatype. Not sure how much work that would be but really like that idea…

Very very interesting reading, love the direction you guys are taking for Blender 2.8 so far. Congratulations and many thanks for all the hard work, it really is appreciated.

> A collection has the following data: name, visible, selectable, engine settings per collection, overrides, element list.

Could we eventually also have per-collection snapping options or a toggle?

Sometimes one stores very high-polygon or geometrically complex objects in one collection (say highly subdivided meshes, vegetation, fluid simulations, etc) in one layer which one hardly ever wants to snap to. But we may want to snap stuff like background scene elements, or architectural assets, or decoration assets to the ground, walls, etc.

Being able to toggle which layers/collections to snap to would also be great :)

Thanks for this report on your progress – it’s very interesting to read about the direction you guys are taking for 2.8, and it sounds reassuringly good!

Being able to see your work in many different ways without changing the underlying data is something Blender is currently not good at, so the layer system will be very welcome. Currently, I have to play around with shader nodes or material setups for simple things like disabling lighting etc. and the multi-object plans are just the icing on the cake.

Real multi-object editing in 2.8 would be amazing. Although I usually just work on a single object at a time, there have been many times in my 2 years of Blender where I’ve wanted to be able to edit a bunch of objects at the vertex level at once, as it makes certain things like aligning vertices across multiple objects much easier than doing each one individually. Multi-UV edit is an absolute necessity – even if the multi mesh edit goal slips out of sight for some reason.

Not sure if this is relevant to the layer system, but the “viewport” part makes it sound as if it could be:

One thing that would be very useful for animating is if we could set the current frame independently in different viewports.

For example if you animate a camera from point A to point B with two keyframes, one on frame 1 and one on frame 50.

Now you want to change keyframe 1 while being at keyframe 1. But at the same time you want to see how this keyframe change affects the camera at frame 25.

Thanks Dalai, Severin and all others who took, and will take, part in the making of these big changes, good luck.

Just let artists know as early as possible when we can already play with it.

Please, make B2.8 as versatile as Cinema 4D.

If I have not misunderstood, we’ll be able to edit, sculpt, or animate different armor at the same time. I suppose we’ll also be able to edit the UV map of several objects as if it were one?

Too good, I need to see it to believe it.

If so, I suggest an option to edit/sculpt/… only the active or all selected objects.

Mesh editing may be the most complicated of modes to be supported (due to merge operations). But multi-object UV editing is a highly praised feature it would be great to support.

> If so, I suggest an option to edit/sculpt/… only the active or all selected objects.

Duly noted. Something to keep in mind if things get out of hand.

Would love to see Multi Object Editing in Blender. Whether implemented on the ground level (preferably), or even as a hack (using Join, but retaining Object Data, Materials etc, when Separated).

Re new objects added to non active layers – this would be useful for me. I often set up scenes for technical illustration by importing say .obj .dae or .lwo meshes into layers to be props to a mesh made in Blender. I arrange different combinations of layers to be active for a particular rendering with lights, backdrop, etc conveniently separated. It would be handy therefore to import or place an object into a chosen layer without isolating it (unselecting every other layer) and then re-establishing all those that are wanted. I dare say more artistic users would benefit from this capability as well. Hope that makes sense and is helpful. I will be very happy when improved layer management finally makes it into Blender.

All this new capability sounds very powerful, but for those of us that won’t need or use most of it, please make it always possible to do simple things simply. The new structure should never prevent folks from doing things the “old way” without having to work around new capabilities.

In every paradigm shift, there’s always an “old way” of doing things that gets completely phased out of existence and relevance. Blender’s stilted 20 layer system being thrown in the garbage is a good thing, and I think folks will realize the objective improvements once they become acclimated to the new system.

Thanks for sharing this. I have some questions.

1. Are Collections, in other words, nested sub-layers but without some top-level settings?

2. No third level- collection within collection. Right?

3. Object can be assigned to layer alone or collection within that layer?

4. Objects can’t be assigned to multiple layers or collections. Correct?

5. In “Scene and Layer” section diagram, what exactly “Base” stand for?

6. “multiple viewports in the same screen, each one showing different objects” Don’t get this part. Users will be able to define which layers are visible in particular viewport? Or this is extension to “View Global/Local” option but on whole single layer instead of selected objects?

Regarding mesh edit on multiple objects. This sounds interesting on paper but I think this alone is a humongous task that will delay 2.8 for several months. You basically have to redesign a big part of the modeling workflow and code dozens of operators from scratch. A simple “make face” operator will need huge layers of logic to work as people expect. Imho the only manageable way to do that for 2.8 is to disable operators that may cause problems in multi-edit-mode and handle them one by one, for the whole 2.8x period.

Thanks for your interest. I have some answers :)

> 1. Are Collections, in other words, nested sub-layers but without some top-level settings?

It goes beyond that. But that’s their hierarchy, right.

> 2. No third level- collection within collection. Right?

Wrong, nested collections should be possible.

> 3. Object can be assigned to layer alone or collection within that layer?

Objects can only belong to collections. Never to a layer directly.

> 4. Objects can’t be assigned to multiple layers or collections. Correct?

Wrong. The same object can be inside different collections, and even different collections from different layers.

> 5. In “Scene and Layer” section diagram, what exactly “Base” stand for?

That’s more complicated to explain. Basically base (in Blender) is a small wrapper around an object datablock that allows you to store data that is unique to your base list. For example if the object is selected in a layer, but unselected in another layer, we can’t have the “selection” property stored in the object itself, can we? So this goes into the base. Which means that each layer stores a list of bases (which point to objects).

> 6. “multiple viewports in the same screen, each one showing different objects” Don’t get this part. Users will be able to define which layers are visible in particular viewport? Or this is extension to “View Global/Local” option but on whole single layer instead of selected objects?

You will be able to define which layer (and only one layer at the moment) you will want to see in a viewport. From this layer all visible collections will be drawn.

> The same object can be inside different collections, and even different collections from different layers.

Is this really necessary? I understand why this is the case with the current layers system with limited amount of layers, but what’s the point of it when we have unlimited custom layers support?

This I can assure you is extremely necessary. This is not nesting (although nesting is to be supported). Instead, it means a layer will be tweaked with a mode and render engine in mind, while another layer can still have the same objects (or a sub-set of them) but be prepared for something else.

Another example: Let’s say you work with games and need to bake textures often. You can have the active layer with the selected and active objects that you need for baking, while working in a different layer modelling the baking cage, or the highpoly components of your model, or texture painting it. You can even have yet another layer that only displays the result of the low poly mesh with the baked texture on it.

But anyways I understand your concern. And we will try to have use cases driving the need for customization and options.

I think I got it now. And I like it! Few more questions to clarify.

1. Layers mostly control HOW to draw and render. They are extension of current RenderLayers, include render/draw settings currently found in Scene and Viewport.

2. Collections control mostly WHAT to draw. They are extension of current Dot Layers but feature naming, unlimited number, nesting etc. Current layer visibility is done here. But better. ;)

I see only one issue here. Deleting Layers and Collections. Is there a design for how to handle that? Imagine this situation:

I have scene with 2 objects.

LayerA with CollectionX include only Object1.

LayerB with CollectionY include only Object2.

What will happen when I delete LayerB? Object2 won’t be visible anywhere until I find it in regular outliner and assign it to Layer and Collection manually? Will I be asked where to move this object(s)? Will it automatically pop up in CollectionX?

Since new layers won’t be just regular RenderLayers but also (and mainly for many) control viewport visibility we need to handle hidden objects with care. This is no issue now since we have 20 hardcoded layers and cannot delete them.

I think Layers should contain a persistent Collection that holds all scene objects that are not present in user-made collections, in other words hidden objects. This means a Layer will always contain all scene objects, and deleting other Layers/Collections won’t affect it. Users will be able to temporarily turn on visibility of this collection (current tilde key) to select given objects and move them to other collections.

This persistent collection will be hidden by default of course.

So, if you have multiple objects in different layers, you won’t be able to see them at the same time any more?

I forgot to address this bit:

> “(…) mesh edit on multiple objects (…) will delay 2.8 for several months.”

Right. Not everything in this proposal needs to be addressed at the same time. As long as the foundation is solid we can tackle some of the proposed changes over the course of 2.8 development. If a change would require too many features to be broken it can always be developed in a separate branch.