It seems like it’s still unclear what the widget project actually is and what it means, so it’s really time to make things clearer, and especially to explain why this project is important for the Blender user interface.

Sidenote: Since I’m currently also working on normal widgets like buttons, scrollbars, panels etc, I actually prefer to call the widget project ‘custom manipulator project’. Just to avoid confusion.

– Julian

Blender suffers from an old disease – the Button Panelitis… which is a contagious plague all the bigger 3D software suites suffer from. Attempts to menufy, tabify, iconify, and shortcuttify this have only helped to keep the disease within (nearly) acceptable limits.

We have to radically rethink adding buttons and panels and bring the UI back to the working space – the regions in a UI where you actually do your work. This, however, is an ambitious and challenging goal.

Here, the widgets come into play! Widgets let you tweak a property or value with instant visual feedback. This, of course, is similar to how ‘normal’ widgets like buttons or scrollbars work. The difference is that they are accessible right from the working space of the editors (3D View, Image Editor, Movie Clip Editor, etc). It is simply more intuitive than having to tweak a value using a slider button that is in a totally different place, especially when the tweaked value has a direct visual representation in the working space (for instance, the dimensions of a box).

Being able to interact with properties, or in fact with your content, directly, without needing to search for a button through a big chunk of other buttons and panels, is like UI heaven. This is how users should be able to talk to the software and the content they create in it. Without any unnecessary interfaces in-between. Directly.

Blender already has a couple of widgets, like the transform manipulator, Bézier curve handles, tracking marker handles, etc. But we want to take this a step further: We want to have a generic system which allows developers to easily create new and user-friendly widgets. And we want the same to be accessible for Add-on developers and scripters.

It’s also known that Pixar’s Presto and DreamWorks’ Apollo in-house animation tools make heavy use of widgets.

In Blender, there are many buttons that can be widgyfied (as we like to call it): Spot-lamp spot size, camera depth of field distance, force field strengths, node editor/sequencer backdrop position and size, …

Also, a number of new features become possible with a generic widget system, like a more advanced transform manipulator, or face maps (groups of faces) with partially invisible widgets, similar to the ones from the Apollo demo.

Actually, all these are already implemented in the wiggly-widgets branch :)

The spot size widget (GIF – click to play)

Face map widgets (GIF – click to play)

Current state of the project:

The low-level core can be considered quite stable and almost ready now. Quite a few widgets have already been implemented and the basic BPY implementation is done.

The next steps would be to polish existing widgets, create more widgets and slowly move things towards master. It’s really not the time to wrap things up yet, but we’re getting closer.

Focus for the next days and weeks will likely be animation workflow oriented widgets, like face maps or some special bone widgets (stay tuned for more info).

A bit on the technical side:

Widgets are clickable and draggable items that appear in the various Blender editors and they are connected to an operator or property. Clicking and dragging on a widget will tweak the value of a property or fire an operator – and tweak one of its properties.
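
To make the property case concrete, here is a minimal sketch in plain Python. It is not the API of the wiggly-widgets branch: `SpotLamp`, `PropertyWidget`, and the `scale`, `minimum`, and `maximum` parameters are hypothetical names chosen for illustration. It only shows the idea of a drag being written straight back to the property the widget is connected to.

```python
from dataclasses import dataclass


@dataclass
class SpotLamp:
    """Stand-in for a lamp datablock; not a real Blender type."""
    spot_size: float = 45.0  # cone angle in degrees


class PropertyWidget:
    """Hypothetical widget bound to a single property of a target object."""

    def __init__(self, target, prop, scale=1.0, minimum=None, maximum=None):
        self.target = target
        self.prop = prop
        self.scale = scale      # property units per pixel dragged
        self.minimum = minimum
        self.maximum = maximum

    def drag(self, delta_pixels):
        """Apply a drag and write the new value straight back to the property."""
        value = getattr(self.target, self.prop) + delta_pixels * self.scale
        if self.minimum is not None:
            value = max(self.minimum, value)
        if self.maximum is not None:
            value = min(self.maximum, value)
        setattr(self.target, self.prop, value)
        return value


lamp = SpotLamp()
spot_widget = PropertyWidget(lamp, "spot_size", scale=0.5, minimum=1.0, maximum=180.0)
spot_widget.drag(20)  # dragging 20 pixels widens the cone by 10 degrees
print(lamp.spot_size)
```

A widget that fires an operator would follow the same pattern, except that the drag would update one of the operator’s properties instead of a property on the data itself.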

Widgets can be grouped into widgetgroups that are task specific. Blender developers and plugin authors alike can register widgetgroups to a certain Blender editor, using a system very similar to how panels are registered for the regular interface. Registering a widgetgroup for an editor will make any editor of that type display the widgets the widgetgroup creates. There are also similarities to how layouts and buttons function within panels. The widgetgroup is responsible for creating and placing widgets, just as a panel includes code that spawns and places the buttons. Widgetgroups also have a polling function that controls when their widgets should be displayed.
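
The registration and polling idea can be sketched roughly like this, again in plain Python rather than the real BPY interface. Every name here (`WidgetGroup`, `register_widgetgroup`, `widgets_for_editor`, the `"VIEW_3D"` string, and the dictionary used as a context) is an assumption made for the example, not something taken from the branch.

```python
class Widget:
    """Hypothetical widget; only carries a name in this sketch."""

    def __init__(self, name):
        self.name = name


class WidgetGroup:
    """Hypothetical base class: a task-specific group tied to one editor type."""

    editor_type = None

    @classmethod
    def poll(cls, context):
        # Decide whether this group's widgets should be shown right now.
        return True

    def create_widgets(self, context):
        # Create and place the widgets, much like a panel spawns its buttons.
        return []


_REGISTRY = {}  # editor type -> list of registered widgetgroup classes


def register_widgetgroup(group_cls):
    """Register a widgetgroup class for its editor type (usable as a decorator)."""
    _REGISTRY.setdefault(group_cls.editor_type, []).append(group_cls)
    return group_cls


def widgets_for_editor(editor_type, context):
    """Collect widgets from every registered group whose poll() passes."""
    widgets = []
    for group_cls in _REGISTRY.get(editor_type, []):
        if group_cls.poll(context):
            widgets.extend(group_cls().create_widgets(context))
    return widgets


@register_widgetgroup
class SpotSizeGroup(WidgetGroup):
    editor_type = "VIEW_3D"

    @classmethod
    def poll(cls, context):
        # Only show the widget while a spot lamp is the active object.
        return context.get("active_object_type") == "SPOT_LAMP"

    def create_widgets(self, context):
        return [Widget("spot_size")]


shown = widgets_for_editor("VIEW_3D", {"active_object_type": "SPOT_LAMP"})
names = [w.name for w in shown]
print(names)
```

Because groups are registered per editor type rather than per editor instance, every editor of that type asks the same registry, which is what makes the widgets show up in all of them.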

For instance, the transform manipulator is a widgetgroup, where individual axes are separate widgets firing a transform operator. Just as every 3D editor has a toolbar, registering a manipulator widgetgroup with the widgets used for object transform will create those widgets for every 3D editor.

Needless to say, plugins can enable their own widgets this way and tweak their widgetgroups to appear under certain circumstances through the poll function, as well as populate the editors with widgets that control the plugin functionality.

(A more detailed and technical design doc will follow; this is more like a quick overview)

So! Hopefully this helped to illustrate what the custom manipulator/widget project is, and why it matters.

It is a really promising approach to reduce UI clutter in button areas and bring the user interface back to the viewports. Work with your content, not with your software. It’s about time.

– Julian and Antony

Any news on this tool? I haven’t been able to find a build of this or updates on this anywhere

Any news on what’s happening with this? I really want this to be added.

I have been on record asking for this for quite a few YEARS.

I hope this is released soon. Blender really needs the plane transform boxes on the widgets. I want to just drag the plane box instead of pressing G, especially when I’m working in the top, left, right, etc. views in orthographic. When in those views, it’s impossible to hold Shift while dragging the axis going in/out of the current view to drag on two axes.

The X and Y axes are twisted in the UV editor.

Haha, indeed! Was focused too much on getting it working, this must have slipped through somehow :S Will fix soon.

awesome thing for Blender to have!

Hmmm, does GSoC 2016 mean that custom widgets will be further into the future? :-(

I guess that’s good and bad. Good luck with the new project Julian!

Hello!

Is this branch available to download and install?

The new 3D manipulator is very useful.

Thanks!

Softimage has the best set of manipulation tools. Press Alt to auto-snap to vertices, edge midpoints, and polygon centers. With the Tweak tool, press the middle mouse button on an edge to select it as the axis reference.

That should be the way to implement VR in Blender.

Great stuff!

Wording: Please use “manipulator” instead of “widget” (or use manipulatorWidget) and plan on dropping “custom” if you think you will make everything “custom” in the end. :)

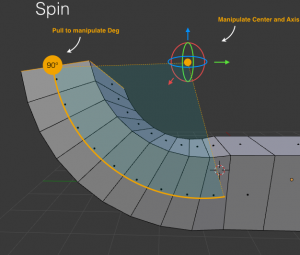

Can we have a way to show the numbers being modified “right in the view window” — instead of having to move your eyes to the side panel (often not even showing). Of course you can custom bake this into the manipulator code (like I see 90 in your picture), but I mean something more automatic/generic. Like semi-transparent list of key/value pairs appearing “nearby”.

Sketchup has a cool feature to be able to “type number(s) after the fact”. For precise stuff it is really great. Essentially you use the manipulator to “know” what you want to do, then you type the number so it is exactly, say “30” degrees.

Not sure how to do this but I think it should be baked into your solution.

Would a plugin be able to “augment/extend” or “replace” an existing manipulator or a portion of it?

Would any of this ease implementing (for example) a “ViewCube” (Maya). Not associated with an object but rather the view itself.

Your picture contains text and arrows that describes what these are for. See how this was very useful to explain the widget use. Can we have this concept within the software as a form of interactive help on any custom manipulator – like it would popup the first time with a “got it” button OR would be summoned automatically if your mouse keeps steady on a manipulator (like a semi-transparent tooltip that you can click to get more info – could jump into the documentation page). This is popular in IDEs.

Hi

I was looking for a test-build for this branch at GraphicAll.org, but it does not exist, under either name: “widget” or “custom manipulator”.

I also tried to build it from here: https://builder.blender.org/builders

But the drop-down list of branches does not include any of the branches to watch in 2016.

This sounds great!

I hope you might do this for textures as well, like in Maya: put on a texture on some flat faces of a model (or have a standard projection for a cube, or a sphere) and adjust it in the 3D viewport, like you would adjust a 3D object via x,y,z + rotation and size. That way one doesn’t need to put seams on and manually unwrap a model every time for simple standard objects (like cylinder, cube, sphere) or relatively flat sides (Faces) of objects. Or is such a thing already in Blender?

Does anybody know where I can get a build of this for Windows? (32 or 64)

thanks

This is awesome!!! We really need that.

I hope there will be a custom manipulator for the pivot point, because if you want to transform it freely now, it’s too complicated. And thanks for your hard work.

When will this be integrated into the master branch? Am looking forward to trying it out.

Just out of interest, when using the Cycles or Internal Render mode, will the UI widgets show over the top of it ( to allow the user to easy select and manipulate objects within that rendered scene / view )?

It’s hard to predict when a merge into master might happen, but I hope it will happen in the coming months.

I could imagine having an option to show/hide widgets for rendered views. Not a big deal :).

Having the manipulation UI over the rendered view would be a huge win – many users are probably using Cycles in Rendered view, and either have another window to manipulate the scene or toggle between rendered and non-rendered view. Being able to manipulate and see the resulting re-render from the same scene would be awesome!

Can’t wait to try it out! Would also be cool to see more animations of the new widgets in practice ( post some animating gifs to #b3d twitter, to build up a buzz )

Not sure this is within the scope of this section, but construction lines/snapping along with a decent inference engine wouldn’t go amiss

– for reference see Sketchup’s inference engine and construction line implementation.

The rotate manipulator is an old version, not the industry standard like the Maya version (trackball)…

Blender: mouse selection between coloured circles IS NOT possible for rotation.

Maya: mouse selection between coloured circles IS possible for rotation. Maya has virtual trackball.

Blender has a double press of the rotation shortcut, but it is not the same as Maya’s virtual trackball.

Please check it:

https://www.youtube.com/watch?v=tn1Yxwtqepc

3:40 – virtual trackball

I like this. I could see rotational widgets that envelop the object rather than the standard widget; I would love to see the measuring add-on be trunked and have the numbers of each axis be what makes a box bigger or smaller in size. It also could be useful to double as a scale tool.

I don’t know python, otherwise I’d develop those myself.

If someone can get his hands on an old copy of Caligari’s trueSpace software, it could prove very useful to study. By the end they were cultivating an extremely widget-centric user interface with a lot of context-sensitive customization, for various tasks from simple object moving/rotating/scaling to point/edge/face editing, extruding, lathing, etc. In many ways, trueSpace was far ahead of its time, and it would be satisfying if some of what Caligari had learned regarding interface design could find its way into Blender.

This is great!

I hope you will also make better rotation manipulators. With the current ones you cannot, for example, see gimbal lock. They are visualised as axes when rotating instead of circles, which is really weird.

Thank you very much!

http://code.blender.org/wp-content/uploads/2015/09/widgets_07.png

i waited for ages for this so finally you start getting things right.

This sounds really exciting. Blender already has wonderful functionality, the proposed gizmos will help make that accessible to more users. Let the artist stay in their Right brain as they work.

One suggestion: The Rotate, Scale and Translate gizmos should appear when you press G R or S.

I feel that forcing the user to think of the abstract concept of X,Y,Z planes e.g. (press G + Z to translate up/down) forces the mind to switch into logical Left brain thinking and is disruptive to the artistic flow(right brain), and especially difficult for beginners.

Best of luck with the project I think its really important.

Just to be completely clear, my suggestion is: when manipulator gizmos are toggled on, pressing G, R, or S should make the appropriate gizmo appear.

This way it would not interfere with current functionality. I am not suggesting removing the current Grab, Scale, Rotate method, just making it easier to access the manipulators that are already there. Thank you.

I’m a little puzzled how this would work. Blender has so many options and capabilities that it will be difficult to make widgets that cover just a few of its manipulations. Also, I’m wondering if only a small subset is covered by the widgets how useful are they, really? And can they be made intuitive? For the spot size example, the manipulator for pulling on an arrow doesn’t seem obvious.

All in all, a great project, with great challenges. I’ll be following this with interest.

Sliders could also work very well. For example, the approach that Houdini has to sliders works very well and lets you adjust values in a very fast and precise way, just with the mouse.

Are you referring to Houdini’s value ladders? I made a patch for a Blender version of them some time ago (https://developer.blender.org/D760), but it’s currently on hold because of design issues.

Wow, after using Houdini I’ve been wishing for this feature in Buzz for quite a while…I really hope this happens eventually.

lol, stupid phone auto complete!

Buzz = Blender.

Exactly. Nice one.

Very important project, which can put Blender on a higher level, attracting A LOT of new users. And, as we know, new users means more contributors, more consumers for all Blender products etc. I.e., the Blender community will become even stronger.

I have always thought that the constraints that have minimum and maximum values would benefit from a system like this, as it can be very unclear in a complex rig where these values are in 3D space. Same goes for the ‘Simple Deform’ modifier with its ‘angle’ and ‘limits’ properties. It would be much more natural to manipulate these properties in the 3D space.

Viewport scrubbing of the timeline. Maya does this by holding down a key.

I know that this is not a visual under-the-cursor widget, but its functionality is still under the cursor in its behaviour. This saves you from having to jump from viewport to viewport while animating: you can simply scrub in the viewport and the animation will update.

Agreed, I spent some time trying to find a way to mimic this in Blender, but to no avail. Would be really nice being able to tweak any value just by scrubbing from an editor using a hotkey.

I’m not sure what you guys mean exactly with ‘viewport scrubbing’? If you mean being able to scrub through frames from the viewport, then this is similar to the alt+mousewheel behavior in Blender

(It also doesn’t seem really widget-related, more like a general feature)

True, there is alt+wheel – however I am one of many who use a tablet as primary input device (I can’t use a mouse for longer than one straight hour because of an injury) and the wheel is not readily available – some tablets don’t even have one. This is why I am longing for a generic “scrub” event that works in the viewport.

Well, using widgets to tweak values sounds cool and all, just make sure we’ll still have numerical input possible.

Stuff like forcefield-strength may at times need extreme values in arbitrary corner-cases.

I don’t wanna be left with continuous-grab-dragging a widget infinitely in such instances.

Also one may need to set such stuff to the exact value x. Here again you need the possibility to just type in x.

Though I’m sure you’re well aware anyway.

Yes, of course, existing input possibilities should be kept. Either, by keeping the button the widget represents, or – and I prefer this – by allowing widgets to use numerical input and precision dragging.

I am in favor of simplifying the user interface, but the big problem is that the buttons philosophy is so entrenched in software in general that the only immediate way out I see is to give users the option to choose their own interface, as already happens with the theme/interface color presets. For example, I prefer the 2.4x scheme because it is simple and elegant, unlike the cloudy gray default.

Anyway, I think experiments need to be done in order to test the acceptance of the community.

Yay! Manipulator widgets are super important to work nicely!!

I really love that there is a trend of making everything possible right under the cursor! But also don’t forget invisible handles so you don’t always need to fiddle with the tiny little arrows but can use the whole screen with the right key combination.

I am especially waiting for a gizmo to move keyframes around in the graph editor & dopesheet, not to mention animating transforms in the compositor like Sean said.

That would be very useful for my work, keep it up!

As a character animator the Face Map Widget most excites me. Being able to rotate an arm or finger just by moving my mouse over the item to select would be brilliant!

I prefer functionality from Maya ( industry standard). Especially rotate manipulator ( like trackball) and waiting some years for your work! NICE! I am very happy that you have a time to prepare it. THNX.

Great! I’m really hoping this helps make the compositor a bit more friendly to animate 2D things in, as well!

Amazing, this is so exciting. :D

Just one thing, an old problem that I have: when I change Blender to work with the left mouse button and the handle stands in front of vertices, I can’t select them… hope this is resolved also :3 :D

With the current transform manipulator you can get that to work by doing some edits to the keymap configuration: Set the manipulator operator (view3d.manipulator) to map type ‘Tweak’ and event value of the selection operator (view3d.select) to ‘Click’.

This won’t work yet in the wiggly-widgets branch, but I plan to make it work ;)

Very nice, I am looking forward to this!

This is nice, been stalking related commits. :)
