Many visual effects commonly implemented with particles are using systems which continuously “grow” and/or “shrink”, be it rain drops falling from clouds, dust kicked up from the group or abstract fluid particles flowing in and out of a simulation domain. New particles are emitted and “dead” particles are removed from the simulation every frame. This is different from other commonly used systems such as meshes, which are mostly static in nature.

The way particles are currently implemented in Blender does not work nicely for such particle systems. The only way one can modify the amount of particles currently in the simulation is by choosing a very high overall particle amount and then gradually activate particles over a range of frames. The rate with which particles are emitted is not directly controllable, one has to choose start and end frames for emission and the particle amount based on a single fixed rate. In the worst case the amount of particles can be multiple times bigger than the number of particles visible in the simulation at any time, leading to a lot of wasted memory and preventing simulations with high emission rates over any longer period.

The reason for all these limitations lies in the simplistic way the underlying particle data buffers are allocated: The main particle buffer, as well as child particles and all additional data (boids, hair, etc.) are fixed-size, monolithic buffers. With this kind of buffer allocation a fixed “amount” value is the only viable method. Determining the necessary size of the buffer when the emission rate is animated over time would require a mathematical integration, which would lead to all sorts of accuracy problems.

Several months ago Janne Karhu, coder and maintainer of the particle code, and i came up with an idea for fixing this problem: Instead of one contiguous buffer large enough for all particles ever used in a simulation, the particle data would be allocated in smaller chunks or “pages”. Whenever there is not enough room (i.e. unused particle memory) in the existing pages, a new page is allocated and added to the end of the list. Even better: pages that contain only “dead” particles (aka “dead pages”) can be removed from the simulation again, thereby reducing memory requirements dramatically in most cases!

The basic implementation of paged buffers is now finished and most of the particle code has been converted to make use of it. You can find the code in a separate project repository hosted on http://www.gitorious.org/ under http://www.gitorious.org/blender-paged-particles.

As the rest of this blog post will be pretty dry and technical, i will just show you a quick screencast of the latest results. For this little demo i used two text objects to display the total emission count at the top and the number of currently allocated particles at the bottom. You can see from the bottom number that even after emitting hundreds of thousands of particles and even with extremely high emission rates the amount of allocated memory stays reasonably small when particle lifetimes are limited.

Small screencast demo of animated emission rates

Growing

Extending a paged buffer is quite simple and very efficient compared to the current method of changing the particle amount. In general the number of particles in a specific frame will not be a perfect multiple of the fixed page size, which means that the last buffer page is usually not completely filled. When the buffer should be extended by a number of particles, this last page is first filled up. If the unused space available on the last page is insufficient, the required new pages are added to the buffer.

Extending a paged buffer is quite simple and very efficient compared to the current method of changing the particle amount. In general the number of particles in a specific frame will not be a perfect multiple of the fixed page size, which means that the last buffer page is usually not completely filled. When the buffer should be extended by a number of particles, this last page is first filled up. If the unused space available on the last page is insufficient, the required new pages are added to the buffer.

Shrinking

Freeing memory space from the buffer that is not used any more can be done in two different ways, each with it’s own pros and cons:

- Free Dead Pages:

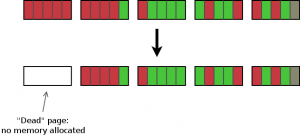

As soon as all particles on a specific page are dead, the page’s data buffer can be freed as a whole. This way a large amount of data can be freed during a simulation without having to modify any of the remaining pages.

As soon as all particles on a specific page are dead, the page’s data buffer can be freed as a whole. This way a large amount of data can be freed during a simulation without having to modify any of the remaining pages.

There is a prerequisite for this method to work effectively: The particle lifetime must be limited. If particles have strongly varying lifetimes, the likely result is that active particles are scattered across all pages in the buffer. Since pages can only be freed when all particles on them are dead, this means that many pages with only few active particles still waste a lot of memory. However, for most situations where particles are emitted continuously and lifetimes are limited this method is effective and has very good performance. - Compressing:

The second method for freeing memory consists in actually moving data in the buffer to fill up the gaps left by dead particles. This ensures that after the compression the buffer does not contain dead particles any more and takes up as little memory as possible. The disadvantage is that potentially a lot of data has to be copied around, up to a full copy of the buffer. Even then this method is better than the current way of doing a full buffer copy, because due to the partitioning the maximal amount of additional allocated data during compression is one single page!

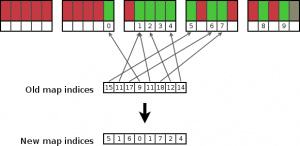

There is another problem that has to be considered: Because with this method the particles in the buffer are moved around, the indices used to directly address buffer elements will change. This has to be taken into account by any other data that references the particles. A typical example would be child particles, which store their parent particle’s index to quickly access the main particle buffer. In order to give the caller a chance to react to changing particle indices, the compression is done in 3 steps:- First a temporary data layer is added to the buffer, which stores the new element indices for each particle (see the Data Layers section for more info on data layers).

- Then a callback function is used in which the buffer owner has the chance to update any potential references to the new indices.

- After the indices are updated the elements can finally be moved and the index layer removed again.

- First a temporary data layer is added to the buffer, which stores the new element indices for each particle (see the Data Layers section for more info on data layers).

Which one of these cleanup functions is called and when depends on the type of particle system used and the current state of the buffer. A possible approach would be to free the dead pages frequently (i.e. after each frame update) and only use the buffer compression when the amount of wasted memory becomes critical. This can easily be done by comparing the allocated buffer elements to the number of active elements and define a threshold for compression, e.g. 50% dead particles.

Data Layers

In order to make particle systems more extensible and future proof we need a system of storing arbitrary types of data associated to particles. Also we need to be able to add and remove data to/from particles without a central struct that stores pointers to each and every possible piece of data, as it currently is the case in particle systems: the central “ParticleData” struct has pointers to hair, boids, etc. just to be able to quickly access this data, each additional feature increases the particle data size! Storing user-defined properties for particles is currently not possible, but it is crucial to the ability to define customized simulations.

Other parts of Blender, notably meshes, already have systems for “custom data layers” in place. A similar concept has been integrated into the paged particle buffers now. This allows particles to store optional data for features such as boids or hair in independent data layers, so the core ParticleData struct is not cluttered up and increased in size by redundant data pointers.

Each of the pages in the buffer allocates a data array for each of the layers of the buffer. By using small pointer arrays, access to the actual data arrays can be kept fast. All this internal management logic is hidden from the user (see the Buffer Access section).

Buffer Access

The current simple particle buffers are mostly accessed in C code by plain pointer arithmetic, adding the particle index to the base buffer pointer. This simple way of accessing C arrays does not work with paged buffers obviously. So now two new methods have been implemented for easy access to the particle buffers:

- Paged buffer iterators:

This is the preferred method when accessing all particles in the buffer (or a subset). Iterators have a skip function callback, which allows users to restrict to a subset, e.g. all visible particles. The iterator efficiently jumps from page to page and automatically takes care of dead pages. - Direct access by index:

This is useful when single or unordered (random) access is needed, e.g. when looking up parent particles. It is slightly less efficient than iterators when accessing large particle amounts, but better suited for access by index.

What happened to this…?

Hello,

I dont know if this can be useful, or maybe you have particles implemented this way or similar already, but the flyweight design pattern can be interesting:

http://en.wikipedia.org/wiki/Flyweight_pattern

Regards.

Sensible programming going one here :-)

Would it be possible/easy for dead pages to be recycled rather than freed?

Very nice feature :D, but i was thinking, aren’t there a way to predict when (in frames) the number of dead particles will be above a limit to force the compression/free dead pages? This could be done on the bake process, the reason for that would be to avoid alot of compression/free dead pages (which i guess are alot processor intensive big particles systens) where they aren’t needed. Sorry for any english error :P

Nice! So, that allows dynamic particle emission and is also more efficient when most of the particles are dead/unborn per frame? Can’t wait for it to be merged into trunk!

Hopefully it allows me to do a new rain test with over 10 million particles! :D

I’ve been waiting so long – now Blender particles are finally ripe thanks to you!

Interesting. I was trying to pick apart in my brain how particles worked in a few other systems I had encountered recently — in particular Little Big Planet 2. It seems they’re doing something sort of similar to that? By implementing it, there’s also controls for how the garbage-collection system will behave. So…

lifetime is [infinity (zero)] or [something > zero]

maximum visible is [something >= zero]

then you’ve got some options to set rules for what happens when you reach your maximum load.

when maximum reached [new particle replaces oldest] or [stop emitting]

It’s half of a good plan. The most optimized situation is where you’re trying to make something like a kitchen sink where the particles fluid can be killed when they reaches the drain. The least optimized situation would be if you wanted to fill the sink up completely. In the latter, no particles would ever be destroyed. That makes me wonder if it would be any more efficient at all??

In order to prevent spam, comments are closed 7 days after the post is published. Feel free to continue the conversation on the forums.