The work-in-progress proposal for the Blender 2.8 version plans for caching, nodes and physics is progressing steadily. If you are an experienced artist or coder, or just want a sneak peek, you can download a recent version from the link at the bottom.

Feedback is appreciated, especially if you have experience with pipelines and complex, multi-stage productions. The issues at hand are complex and different perspectives can often help.

Summary

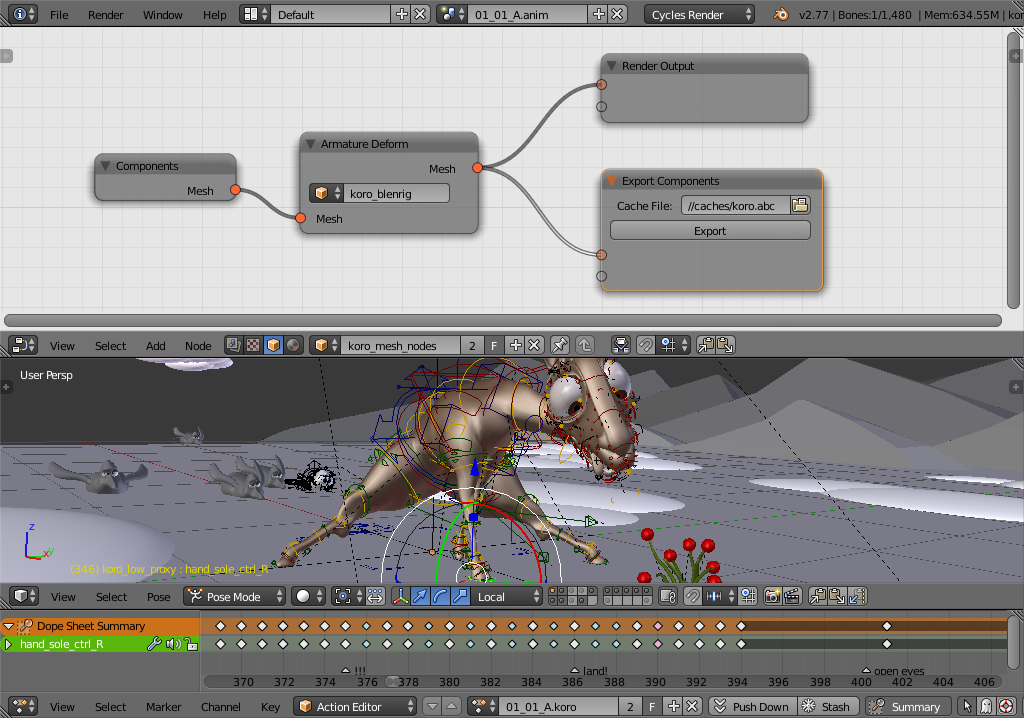

For the 2.8 development cycle of Blender some major advances are planned in the way animations, simulations and caches are connected by node systems.

It has become clear during past projects that the increased complexity of pipelines including Blender requires much better ways of exporting and importing data. Such use of external caches for data can help to integrate Blender into mixed pipelines with other software, but also simplify Blender-only pipelines by separating stages of production.

Nodes should become a much more universal tool for combining features in Blender. The limits of stack-based configurations have been reached in many areas such as modifiers and simulations. Nodes are much more flexible for tailoring tools to user needs, e.g. by creating groups, branches and interfaces.

Physical simulations in Blender need substantial work to become more usable in productions. Improved caching functionality and a solid node-based framework are important prerequisites. Physics simulations must become part of user tools for rigs and mesh editing, rather than abstract stand-alone concepts. Current systems for fluid and smoke simulation in particular should be supplemented or replaced by more modern techniques which have been developed in recent years.

Current Version

The proposal is managed as a Sphinx project. You can find recent HTML output at the link below.

https://download.blender.org/institute/nodes-design/

If you are comfortable with using Sphinx yourself, you can also download the project from its SVN repository (*):

svn co https://github.com/lukastoenne/proposal-2.8.git

Use make html in the proposal-2.8/trunk/source folder to generate HTML output.

(*) Yes, github actually supports svn repositories too …

Node Mockups

For the Python node scripters: The node mockups used in the proposal are all defined in a huge script file. Knock yourselves out!

https://github.com/lukastoenne/proposal-2.8/blob/master/blendfiles/object_nodes.py

Hey Lukas,

This isn’t really related to the article, but I was wondering when you plan to add UDIMs to Blender?

I’m just curious that’s all.

I like this proposal quite a bit. As a regular user of Animation Nodes, the suggestions Jacques made are great. However, I would encourage everyone to take a look at Softimage ICE Trees if you never have before. ICE trees in Softimage are very similar to the sort of access the user has in Houdini, in that you are able to access nearly all the relevant data for simulation, animation, rigging, particles, etc, for the entire scene.

These proposed features are great, but from the mockup I’ve seen so far, the user is missing out on some control. One of the largest benefits of AN (Animation Nodes) is the ability to specifically control and modify data within the node tree. If this system you are proposing is simply a connected tree of nodes, that doesn’t give the user any options for controlling specific parameters for simulations/hair/etc… within the node tree, I think this system will only add to the confusing workflow already in Blender.

I’d like to reiterate… PLEASE PLEASE look at ICE Trees in Softimage. link here: http://download.autodesk.com/global/docs/softimage2014/en_us/userguide/index.html

The nodes shown in that node tree have expandable options that show up in a pop-up menu. Creating a Properties menu for nodes should be up there in terms of priority for a system like this. If allowing for object/point cloud generation and control requires a DepsGraph update, it may be prudent for that fix to happen before the Everything Nodes system gets introduced.

Being a pipeline TD, I can guarantee you that Blender will gain more use in large productions when it becomes node based. The ability to read and write data via nodes is a huge advantage when designing automated systems that need to scale.

A perfect example is how we use Nuke in our pipeline. We produce lots of data from Nuke and use a Nuke file as a template. We fill in the read and write values and execute the script. (We actually do this to the ASCII file before submitting it to the farm.)
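As a rough illustration of that template workflow, here is a minimal Python sketch. The template text is a simplified stand-in and not a real Nuke script, and the placeholder names `read_path` and `write_path`, the function `fill_template` and the file paths are all assumptions made up for this example.

```python
from string import Template

# Simplified stand-in for an ASCII scene template (hypothetical, not real Nuke syntax).
TEMPLATE = Template(
    "Read {\n"
    " file $read_path\n"
    "}\n"
    "Write {\n"
    " file $write_path\n"
    "}\n"
)


def fill_template(read_path: str, write_path: str) -> str:
    """Return the template text with the read and write paths filled in."""
    return TEMPLATE.substitute(read_path=read_path, write_path=write_path)


# Hypothetical paths; the resulting text is what would be submitted to the farm.
script = fill_template("/shots/sh010/plate.####.exr", "/shots/sh010/comp.####.exr")
```

Because the scene file is plain text, the pipeline never has to open the application just to point a job at different inputs and outputs.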

Being able to do this with Blender for FX and hair work would be great.

Also, the way a node-centric design forces developers to code is a lot nicer as well. Code doesn’t drift into areas where it shouldn’t, and each operation is nicely contained.

Houdini and Katana are good reference points for node based workflows in the VFX world. Maya is terrible. Don’t do what they’ve done!

Cheers,

Jase

you have no idea how excited i am for this bit: “Current systems for fluid and smoke simulation in particular should be supplemented or replaced by more modern techniques which have been developed in recent years.”

:D

i’m really hoping we’ll be able to push out more believable large scale smoke and water sims. that was my biggest challenge with blender when i did some vfx for a history channel disaster docu-series a couple years ago.

> 1. FILE FORMAT

The problem of Houdini’s HIP file is the fact that it is a sequence of statements like: 1. create a node A 2. Create a node B 3. Wire node A to node B.

It would be way better if it was a functional (declarative) description of a network rather than a bunch of instructions of how to create a network.
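To make the distinction concrete, here is a small Python sketch of the same two-node network written both ways. The statement tuples, the dictionary layout and the `replay` helper are invented for this illustration and do not reflect the actual HIP format.

```python
# Imperative style: a sequence of statements that builds the network step by step.
imperative = [
    ("create", "A"),
    ("create", "B"),
    ("wire", "A", "B"),
]

# Declarative style: the network itself, described as plain data.
declarative = {
    "nodes": {"A", "B"},
    "links": {("A", "B")},
}


def replay(statements):
    """Execute the imperative statements and return the resulting network."""
    network = {"nodes": set(), "links": set()}
    for op, *args in statements:
        if op == "create":
            network["nodes"].add(args[0])
        elif op == "wire":
            network["links"].add((args[0], args[1]))
        else:
            raise ValueError(f"unknown statement: {op}")
    return network
```

The declarative form can be compared, merged or diffed directly, while the imperative form has to be replayed first to find out what network it describes.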

> 2. CODE

As much as I love VEX, it has its own problems:

1. Unlike Python and C++ it is SIMD (I think it is better to say SIMDish because of the way it works now) which puts constraints on the programming model.

2. It is a data-parallel language and the program (shader) runs on top of the data you give it. If there is enough data, the VEX engine will spawn several threads. If there is not enough data (from the VEX engine’s perspective) then you have a single-threaded task. Sometimes the input data can have just a few points (for example in the SOP context) and for every point the VEX code can do a lot of work. This would require as many threads as the input data has points. But there is no way to tell the VEX engine how we want to manage the threads. We are stuck in a data-parallel world similar to OpenCL / CUDA.

3. Sometimes we have to split a task into several VEX nodes due to the way VEX works.

I would rather vote for one language for scripting, HDKing and VEXing as well as expressioning instead of four.

A language that allows writing in a functional, non-SIMD way, with the ability to control threading and easily change the programming model on the fly in the same code (node).

We could write in a Pythonic sequential way and then call map / reduce (or whatever), turning the same code into very efficient data-parallel code in the same node.
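A minimal Python sketch of that idea might look like the following. The `displace` function and the point data are hypothetical, and the thread pool only illustrates the programming model with an explicit worker count; in CPython, threads would not actually speed up CPU-bound work like this.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import reduce


def displace(point, amount=0.5):
    """Plain sequential code that handles a single point."""
    x, y, z = point
    return (x, y, z + amount)


points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 2.0)]

# Sequential evaluation, one point after another.
sequential = [displace(p) for p in points]

# The same function mapped over the data, with the number of workers chosen by the author.
with ThreadPoolExecutor(max_workers=2) as pool:
    parallel = list(pool.map(displace, points))

# A reduce step that collapses the mapped results into a single value.
highest = reduce(max, (p[2] for p in parallel))
```

The per-point code stays the same in both versions; only the call that applies it to the data changes.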

i think the backend should be rethought. when i use a 3d software i use mostly houdini and the concept for nodes is solid. but they also have old code history that doesn’t fit into today’s technology concepts, and here i find blender could get some attention.

1. FILE FORMAT

in houdini the file format (.hip) is recreated from the unix world; in other words, it’s a unix image file.

i see here blender could use git as a file format system(instead of .blend) so you can get versioning and code access(example python code).

2. CODE

in houdini they use llvm in their own language vex; for most of the new nodes vex is a central part. the extreme part is that their dynamic system is based on vex and it’s one of the fastest systems.

it would be nice if blender could support the llvm approach more; it’s even in the base today, thanks to open shading language.

if you are developing today in blender most of the addons are python and that is not the fastest way. when you can script in clang it’s much easier than coding in c/c++.

sorry when i compare with other tools but i find just to have nodes is one thing the other is speed and i find python or c++ is not the ideal way.

Thank you Lukas for this project ! I was waiting for it for a long time. :)

I’ll read the whole documentation this week-end, but I have a question if you have enough time to reply :

I recently released a free addon to merge animated objects into a single animated mesh driven by a PC2 file. You can read more about it on my website (a quick video in the post shows the goal of this addon) :

http://www.coyhot.com/blender-addon-amination-joiner/

My question is : can we think of a system for “grouping” animated objects without joining them, then creating a cache for those objects and finally creating an instance of this group, pointing to the same cache (optionally streamed with a time offset) ?

Thank you for your time !

The “cache library” concept we developed during Cosmos Laundromat production is an attempt at solving this problem. The basic idea is to have a datablock that defines the details of a cache: file path(s), frame range, etc. Then each cacheable object would just point at the cache lib to be cached alongside other objects using the same cache. Doing this on the group level might also work, but using groups this way is difficult because there is really no place to actually access a group datablock as such, unless it is linked to an object.
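The relationship described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class names, fields and the `frame_offset` handling (taken from the time-offset idea in the question) are hypothetical and are not the Gooseberry implementation or any Blender API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CacheLibrary:
    """Hypothetical stand-in for a datablock holding the details of one cache."""
    filepath: str
    frame_start: int
    frame_end: int
    users: list = field(default_factory=list)


@dataclass
class CacheableObject:
    """An object that only points at a cache library instead of owning a cache."""
    name: str
    cache: Optional[CacheLibrary] = None
    frame_offset: int = 0

    def assign_cache(self, cache, frame_offset=0):
        self.cache = cache
        self.frame_offset = frame_offset
        cache.users.append(self.name)

    def cache_frame(self, scene_frame):
        """Map a scene frame to a frame in the cache, clamped to the cached range."""
        frame = scene_frame - self.frame_offset
        return max(self.cache.frame_start, min(self.cache.frame_end, frame))


# Two objects share one cache; the second reads it with a time offset.
lib = CacheLibrary(filepath="//cache/crowd", frame_start=1, frame_end=100)
first = CacheableObject("first")
second = CacheableObject("second")
first.assign_cache(lib)
second.assign_cache(lib, frame_offset=10)
```

Keeping the file path and frame range in one shared place means an instance only needs to store what differs for it, here the offset.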

During project Gooseberry we had too many other pipeline issues overlaid on top of the actual caching (described in the first chapter of the proposal). This led to my convoluted (and frankly unusable) implementation. The cache lib suddenly was also responsible for hair simulation and other features that have nothing to do with caching. These features were also redundant, since they had to be reimplemented as a separate system.

TL;DR: Yes, combined caches for groups are a necessary feature. I don’t have a definitive answer yet; fitting this into existing workflows is not an easy task.

Thank you for this really detailed reply Lukas, and good luck with this useful and ambitious project !

In fact what you are asking for is Alembic :)

Cheers.

Just some thoughts on the Data Model. From my perspective there are two basic types of data in Blender: 1-dimensional and 2-dimensional. The first type is nowadays the less common. This is data that doesn’t vary over time, for example scene names, which remain static. On the other hand we have 2-dimensional data, which can vary through time and can therefore be animated. Maybe the scene level could be a third dimension, but that doesn’t make much sense as long as objects aren’t shared between scenes. In my opinion, every design for a global node solution should take this into consideration.

On the other hand, I believe that data should be structured in layers. On the bottom layer you will have the basic data types: ints, vectors, floats, text and even lists of them. Just pure data with no relation to any specific elements of the blend file.

On the second layer you will have properties which are part of objects or elements of the scene, like location on objects, index on materials, etc.

On the third layer you will have sets of properties, like the transform for objects or the velocity for particle systems. And so on.

All this wording comes from the idea that the user should somehow have the ability to split and join data structures in order to create higher-level and lower-level elements, as well as the ability to connect any piece of data from any part of Blender to any other element, always taking care of data type compatibility. Just another thought.

In regards to “better ways of exporting data”, I’ve had the requirement to control in more detail what gets exported where, and to store those settings to quickly run a set of exports. So I’ve implemented a node tree that allows chaining filter nodes to select objects from a scene and feed them into exporter nodes. It’s explained here: http://harag-on-steam.github.io/se-blender/#customizing-the-export

I think the design could be further refined to be generally applicable for exporters.

Really excited. Have been looking forward to this since donating towards the Particle Nodes work in 2011. Can’t wait for test builds.

This might be more for UI dev down the line, and maybe I’m missing something, but I’d like to suggest considering the method of exposing parameters of compound nodes seen in Softimage and Fabric Engine, where mutable lists of, in our case, the input and output sockets of Group Nodes would reside at the left and right edges of the editor, and we could drag connectors from sockets to the edges to automatically expose parameters rather than having to instantiate (otherwise unnecessary?) Group Input and Group Output nodes. Making a network of nodes, selecting them and calling a “Group nodes” operator feels quicker and more natural than creating an empty Group, adding parameters, opening it, adding input and output nodes, etc.

What would be the best/official way to give feedback on this?

You can just write comments here.

Alright… I started a document with some pipeline integration thoughts a while back: https://docs.google.com/document/d/1_2f1Ami0lyWeciuMhpFZZb4MisITBigbqVVCHsGKJbQ/edit

The points most crucial for me right now (as I’m using Blender in production thanks to the great Alembic patch) are multi-OS path translation and UNC support (I can’t render comfortably on the farm right now) and a workgroup concept for plugins and settings (how to enable stuff like Animation Nodes or a 3rd-party renderer on the farm without a centralized plugin repository?).

Not directly related to the discussion, sorry for that, but I’m desperate to find someone who can share an x64 Windows build with the Alembic patch.

Can you build it?

Cheers and thanks!

Nope, sorry. Maybe you can throw in a couple of bucks and ask at blenderartists/paidWork

As I promised in the mailing list here are some of my ideas and first impressions about this proposal (sry that it isn’t as nicely structured as yours):

I like that you outlined typical workflows at first because they gave me a deeper understanding on what problems you’re trying to solve.

I have to admit that my knowledge about the movie creation process is limited to what I see and read; I’ve never done most of the stuff I mention here myself. The weeklies were very important for me to get a better understanding of how the process works. Thanks for that!

In my opinion the best way to ensure that no (or fewer) ownership problems occur between the stages is to separate the data the individual artists are modifying.

So at first we have to determine which data is modified by whom and then make the corresponding data types.

The modeler works on verts, edges, polygons and maybe uv coordinates. So the Mesh Object only needs these properties.

The next step is the rigging. From my understanding a rig is basically a *collection of constrained deformers*. So maybe we can have a Rig Object that only stores the rig. The rig shouldn’t be directly bound to the mesh. Basically there are two intermediate datatypes that need to be accessed by both: Vertex Weights and Shape Keys. Both have unique identifiers that can be referenced by the Rig. Also they aren’t a part of the mesh but they depend on it.

Now the animator comes into play. Essentially the animator only modifies the Rig -> A Pose is a set of changes to the default state of the Rig. There is a local copy of the mesh in the file to make the animator’s job easier, but the mesh is never stored to disk. This is where nodes can come in handy for the first time. We could have a node that takes a Mesh, a Rig and a Pose object and outputs a new transformed Mesh.

The animator’s job is now to create animations (obviously). An animation is nothing more than a sequence of poses. I can imagine a node that takes a Mesh, a Rig, an Animation and a frame number and outputs the transformed Mesh.
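The two nodes described above could be sketched as pure functions in Python. Everything here is a simplifying assumption for illustration: the data classes are hypothetical, the vertex weights are folded into the Rig for brevity, a Pose is reduced to per-deformer translation offsets, and the frame number is a 0-based index into a list of poses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mesh:
    verts: tuple  # tuple of (x, y, z) tuples


@dataclass(frozen=True)
class Rig:
    weights: dict  # deformer name -> {vertex index: weight}


@dataclass(frozen=True)
class Pose:
    offsets: dict  # deformer name -> (dx, dy, dz) change from the rest state


def pose_node(mesh, rig, pose):
    """Return a new transformed Mesh; none of the inputs are modified."""
    verts = [list(v) for v in mesh.verts]
    for deformer, offset in pose.offsets.items():
        for index, weight in rig.weights.get(deformer, {}).items():
            for axis in range(3):
                verts[index][axis] += weight * offset[axis]
    return Mesh(tuple(tuple(v) for v in verts))


def animation_node(mesh, rig, animation, frame):
    """Pick the pose for a frame (clamped to the sequence) and apply it."""
    pose = animation[max(0, min(len(animation) - 1, frame))]
    return pose_node(mesh, rig, pose)


mesh = Mesh(((0.0, 0.0, 0.0), (1.0, 0.0, 0.0)))
rig = Rig({"arm": {1: 0.5}})
animation = [Pose({}), Pose({"arm": (0.0, 0.0, 2.0)})]
posed = animation_node(mesh, rig, animation, 1)
```

Since each stage only reads the others' data and writes a new Mesh, the modeler's mesh, the rigger's rig and the animator's poses never have to be edited by anyone else.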

The hair specialist also gets his own data type which only contains a collection of Strands. He doesn’t modify the Mesh but he can use it as a reference to know where to place the strands (similar to what the Rigger and Animator do with the mesh).

Finally the Mesh, the Animation and the Strand Collection are imported into the final scene and joined into a single renderable object.

Those were just some thoughts about how the data can be split so that everyone only works on his own data -> no one has to modify the objects ‘owned’ by others. I know it’s fairly rough and might involve a LOT of refactoring, but I wanted to share it anyway. :)

I also like your solution to create a new local object that acts like any other object. But what happens when two artists append the same object and make copies of it? Later the changes have to be joined somehow, or not?

Purely stateless modifiers would be nice indeed! They should just take a Mesh and some settings and output a new Mesh. Something that can be more difficult then is supporting parameters like the show_on_cage property of the Array modifier. Maybe these have to be removed (without looking at the code, it feels like this functionality is quite hacky anyway).
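As a minimal sketch of what “stateless” means here, the Python below treats a modifier as a plain function from vertices and settings to new vertices. The function names and the vertex-list representation are assumptions for this example; this is not Blender's modifier API and it ignores edges, faces and options such as show_on_cage.

```python
def array_modifier(verts, count, offset):
    """Return a new vertex list with `count` copies, each shifted by `offset`."""
    ox, oy, oz = offset
    return [
        (x + i * ox, y + i * oy, z + i * oz)
        for i in range(count)
        for (x, y, z) in verts
    ]


def evaluate(verts, stack):
    """Run a list of (modifier, settings) pairs, passing each result to the next."""
    for modifier, settings in stack:
        verts = modifier(verts, **settings)
    return verts


base = [(0, 0, 0)]
stack = [
    (array_modifier, {"count": 3, "offset": (1, 0, 0)}),
    (array_modifier, {"count": 2, "offset": (0, 1, 0)}),
]
result = evaluate(base, stack)
```

Because no modifier keeps hidden state, the same inputs always give the same output, which is what makes the results safe to cache or to re-evaluate in any context.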

Combining particle, simulation, animation and mesh nodes into one node tree would be very cool, but I’m still not quite sure what that could look like as they work in totally different ways. (I have a similar problem in Animation Nodes. Simulation is just not supported (without hacks) because it’s a totally different execution model.)

I totally agree with all the points you made about the Generic Node Compiler! It’s pretty similar to what I did with Animation Nodes. But there I convert the nodes into a Python script, which is slower of course. But it’s definitely the best and most performant way!

Thanks for the feedback, Jacques!

The integration of simulation processes makes it necessary to view nodes as fairly high-level operations. Each node represents a part of the object’s general update process (managed by the depsgraph). Each node could be scheduled individually, with dependencies on other nodes given by the connections. Only part of an object’s deps-sub-graph would usually require evaluation, depending on what output data is required (e.g. rendering and viewport need different data).

Most nodes of an object could be scheduled en-bloc, like a typical modifier stack where they just pass data from one to the next. However, for simulations such as a rigid body sim some nodes may depend on an external node (such as the scene-level Bullet time step), and that external node can in turn depend on some of the object’s nodes (e.g. node defining the collision shape). This means that in general the object update needs to be broken up into several parts, which are then connected to the outside world (other objects and the scene).
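The scheduling idea in the last two paragraphs can be sketched with Python's standard `graphlib` module (Python 3.9+). The node names and the dependency table are hypothetical and only mirror the rigid body example; this is not how the depsgraph is implemented.

```python
from graphlib import TopologicalSorter

# node -> set of nodes it depends on (hypothetical names)
DEPS = {
    "cube.mesh": set(),
    "cube.collision_shape": {"cube.mesh"},
    "scene.bullet_step": {"cube.collision_shape"},  # external, scene-level node
    "cube.rigid_body_transform": {"scene.bullet_step"},
    "cube.render_mesh": {"cube.mesh", "cube.rigid_body_transform"},
    "cube.viewport_bounds": {"cube.mesh"},
}


def required_nodes(deps, output):
    """Collect the requested output and everything it transitively depends on."""
    needed, stack = set(), [output]
    while stack:
        node = stack.pop()
        if node not in needed:
            needed.add(node)
            stack.extend(deps[node])
    return needed


def schedule(deps, output):
    """Return an evaluation order covering only the part of the graph that is needed."""
    needed = required_nodes(deps, output)
    subgraph = {node: deps[node] for node in needed}
    return list(TopologicalSorter(subgraph).static_order())


render_order = schedule(DEPS, "cube.render_mesh")
viewport_order = schedule(DEPS, "cube.viewport_bounds")
```

In the render schedule the object's own nodes end up on both sides of the scene-level step, which is the reason the object update cannot always run as one block.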

I will go into this in more detail in the upcoming particles chapter, and you can find a glimpse of it in the “Fracturing” part.

This is great news, and I’m looking forward to it greatly. My last couple of projects have involved linked assets with soft body and cloth simulation with caches, and it was pretty tough going. And I love the move to a more node based paradigm for other areas too, such as modifiers. Looking forward to trying the branch on some old scenes if I can!

Could it be possible to cache to some kind of internal blender data structure, similar to materials etc?

I 100% agree with JORGE. It’s very hard to do motion graphics in Blender and a node based system could open up a new world of possibilities for Blender artists. As of now the only (ageing tbh) tool for motion graphics in Blender is the modifiers. Great news nevertheless!

Have you tried the animation nodes Blender add-on that Jorge mentioned? It’s pretty amazing!

Nice to see this proposal. I’ve just made a quick read, and it’s a dense document that requires time. But one thing that I’ve noticed is that it is very oriented to character animation and VFX.

Other types of use, such as Motion Design, haven’t been considered. Nodes are heavily used for Motion Design in other applications such as C4D and Houdini. In Blender we have the example of Animation Nodes, an addon that via pynodes brings an incredible toolset to Blender. I would like to see most of the functionality and flexibility that this tool offers for object instancing, mesh and curve manipulation and animation brought into the new Blender nodes.

In any case thanks for your hard work. The task is really hard to achieve and the expectations are really high.
